VGGT: Visual Geometry Grounded Transformer

Jianyuan Wang, Minghao Chen, Nikita Karaev, Andrea Vedaldi, Christian Rupprecht, David Novotny

2025-03-17

Summary

This paper introduces VGGT, a neural network that infers the key 3D attributes of a scene, such as camera parameters, depth maps, and 3D point locations, directly from one or more images.

What's the problem?

Traditionally, 3D computer vision models are designed for, and constrained to, a single task. Many are also slow, because they rely on extra optimization steps to refine their results.

What's the solution?

VGGT is a single feed-forward network that performs multiple 3D tasks at once: estimating camera parameters, predicting depth maps, reconstructing point clouds, and tracking 3D points. It works with a single image, a few images, or hundreds of images, and produces its reconstruction in under one second.

Why does it matter?

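This work matters because it replaces several specialized 3D vision models with one versatile, efficient network. VGGT achieves state-of-the-art results in camera parameter estimation, multi-view depth estimation, dense point cloud reconstruction, and 3D point tracking. Used as a pretrained feature backbone, it also improves downstream tasks such as non-rigid point tracking and novel view synthesis.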

Abstract

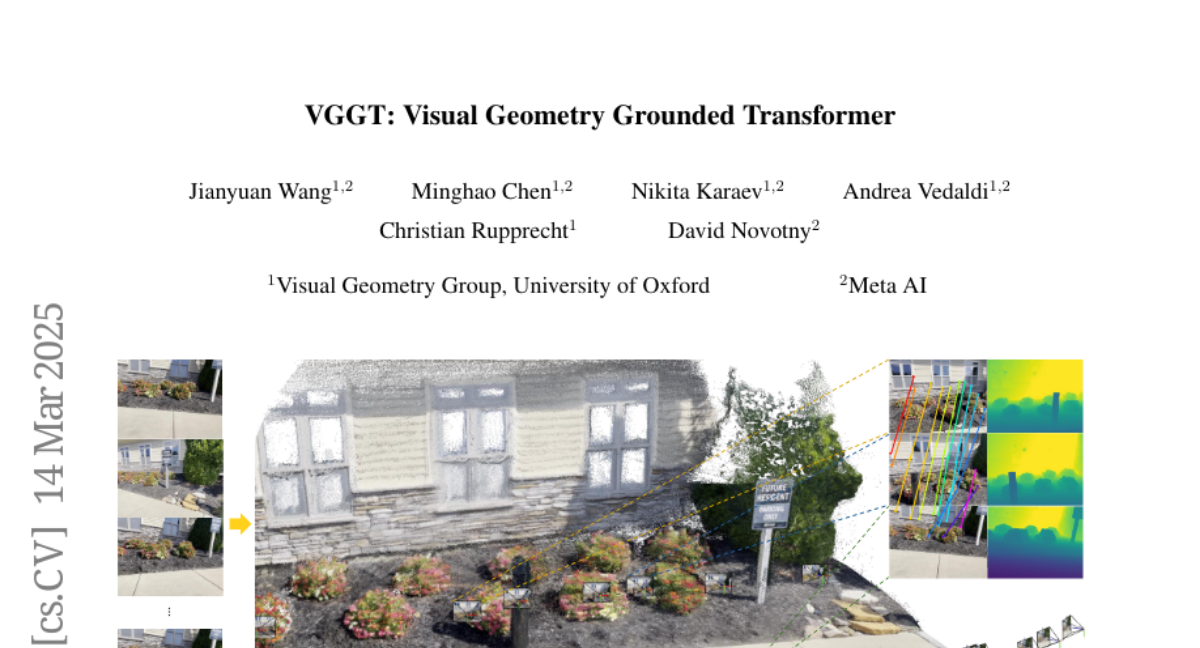

We present VGGT, a feed-forward neural network that directly infers all key 3D attributes of a scene, including camera parameters, point maps, depth maps, and 3D point tracks, from one, a few, or hundreds of its views. This approach is a step forward in 3D computer vision, where models have typically been constrained to and specialized for single tasks. It is also simple and efficient, reconstructing images in under one second, and still outperforming alternatives that require post-processing with visual geometry optimization techniques. The network achieves state-of-the-art results in multiple 3D tasks, including camera parameter estimation, multi-view depth estimation, dense point cloud reconstruction, and 3D point tracking. We also show that using pretrained VGGT as a feature backbone significantly enhances downstream tasks, such as non-rigid point tracking and feed-forward novel view synthesis. Code and models are publicly available at https://github.com/facebookresearch/vggt.
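The quantities named in the abstract are geometrically linked: a depth map combined with camera parameters determines a point map. The sketch below illustrates that standard pinhole relationship in NumPy. It is not VGGT's code and does not use the VGGT repository; the function name `unproject_depth`, the intrinsics matrix `K`, and the 4x4 camera-to-world pose convention are all assumptions chosen for illustration.

```python
import numpy as np

def unproject_depth(depth, K, cam_to_world):
    """Lift an (H, W) depth map to an (H, W, 3) point map.

    Hypothetical helper for illustration only. Assumes a pinhole camera with
    3x3 intrinsics K and a 4x4 camera-to-world rigid transform.
    """
    H, W = depth.shape
    # Pixel grid: u indexes columns (x), v indexes rows (y).
    u, v = np.meshgrid(np.arange(W), np.arange(H))
    pixels = np.stack([u, v, np.ones_like(u)], axis=-1).astype(np.float64)
    # Back-project each pixel through the inverse intrinsics, then scale by depth.
    rays = pixels @ np.linalg.inv(K).T
    points_cam = rays * depth[..., None]
    # Move the points from the camera frame to the world frame.
    R, t = cam_to_world[:3, :3], cam_to_world[:3, 3]
    return points_cam @ R.T + t

# Toy example: a 3x5 image of a flat wall 2 units in front of the camera.
K = np.array([[100.0, 0.0, 2.0],
              [0.0, 100.0, 1.0],
              [0.0, 0.0, 1.0]])
depth = np.full((3, 5), 2.0)
points = unproject_depth(depth, K, np.eye(4))
```

With an identity pose, the pixel at the principal point (row 1, column 2) lands on the optical axis at depth 2, and every point in the map has a z-coordinate equal to its depth value.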