Video-Panda: Parameter-efficient Alignment for Encoder-free Video-Language Models

Jinhui Yi, Syed Talal Wasim, Yanan Luo, Muzammal Naseer, Juergen Gall

2024-12-26

Summary

This paper talks about Video-Panda, a new method for understanding videos in relation to language that doesn't require heavy encoders, making it more efficient and faster.

What's the problem?

Most current video-language models rely on large and complex encoders that require a lot of computational power and resources. This makes processing videos slow and expensive, especially when working with multi-frame videos. There's a need for a more efficient way to analyze videos without sacrificing performance.

What's the solution?

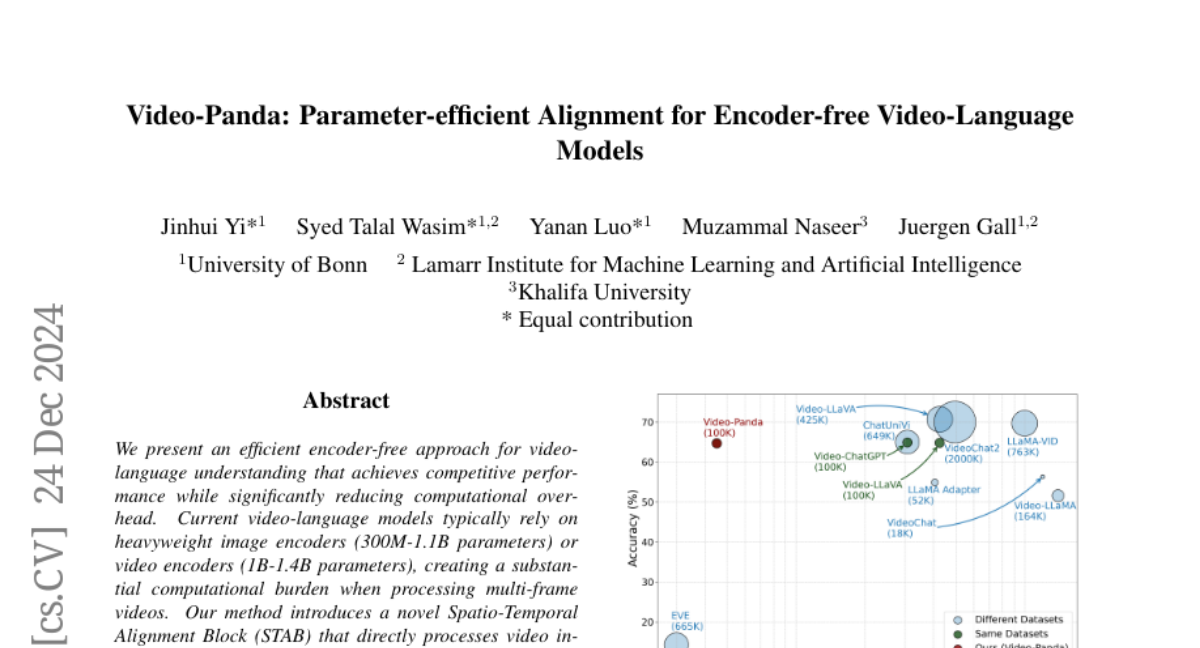

The authors introduce Video-Panda, which uses a Spatio-Temporal Alignment Block (STAB) to process video inputs directly without the need for pre-trained encoders. This method only uses 45 million parameters, which is significantly less than traditional models that use hundreds of millions. The STAB allows the model to efficiently extract important features from videos and understand the relationships between different frames. The results show that Video-Panda performs just as well or even better than existing models in tasks like answering questions about videos, while being 3 to 4 times faster.

Why it matters?

This research is important because it makes video-language processing more accessible and efficient. By reducing the computational burden, Video-Panda can help improve applications in areas like video analysis, content creation, and AI-driven video interactions, making it easier for developers to create tools that understand and generate video content.

Abstract

We present an efficient encoder-free approach for video-language understanding that achieves competitive performance while significantly reducing computational overhead. Current video-language models typically rely on heavyweight image encoders (300M-1.1B parameters) or video encoders (1B-1.4B parameters), creating a substantial computational burden when processing multi-frame videos. Our method introduces a novel Spatio-Temporal Alignment Block (STAB) that directly processes video inputs without requiring pre-trained encoders while using only 45M parameters for visual processing - at least a 6.5times reduction compared to traditional approaches. The STAB architecture combines Local Spatio-Temporal Encoding for fine-grained feature extraction, efficient spatial downsampling through learned attention and separate mechanisms for modeling frame-level and video-level relationships. Our model achieves comparable or superior performance to encoder-based approaches for open-ended video question answering on standard benchmarks. The fine-grained video question-answering evaluation demonstrates our model's effectiveness, outperforming the encoder-based approaches Video-ChatGPT and Video-LLaVA in key aspects like correctness and temporal understanding. Extensive ablation studies validate our architectural choices and demonstrate the effectiveness of our spatio-temporal modeling approach while achieving 3-4times faster processing speeds than previous methods. Code is available at https://github.com/jh-yi/Video-Panda.