VideoRepair: Improving Text-to-Video Generation via Misalignment Evaluation and Localized Refinement

Daeun Lee, Jaehong Yoon, Jaemin Cho, Mohit Bansal

2024-11-25

Summary

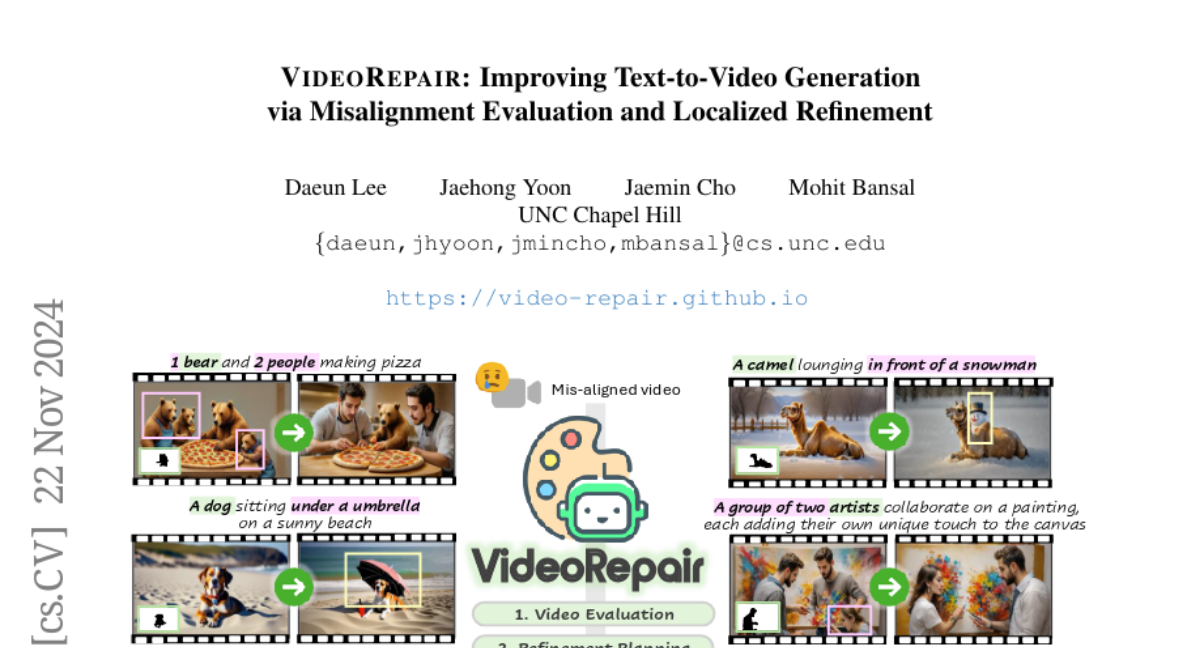

This paper introduces VideoRepair, a new system designed to improve the quality of videos generated from text descriptions by fixing mismatches between the video and the text prompts.

What's the problem?

When creating videos from text prompts, existing models often produce videos that do not accurately match the descriptions, especially when the prompts involve complex scenes with multiple objects. This misalignment can lead to videos that look unrealistic or do not convey the intended message, making it hard for users to get the results they want.

What's the solution?

VideoRepair addresses this problem by using a four-step process to identify and correct these misalignments. First, it evaluates the video to find out where it doesn't match the text prompts. Then, it plans how to refine the video by figuring out which parts are correct and which need adjustments. Next, it breaks down the video into regions to isolate areas that need fixing. Finally, it makes targeted changes to those areas while keeping the correctly generated parts intact. This method allows for precise improvements without needing extensive retraining of the model.

Why it matters?

This research is important because it enhances the ability of AI systems to generate high-quality videos that align closely with user expectations. By improving how videos are created from text prompts, VideoRepair can be useful in various applications such as filmmaking, advertising, and education, where accurate visual representation is crucial.

Abstract

Recent text-to-video (T2V) diffusion models have demonstrated impressive generation capabilities across various domains. However, these models often generate videos that have misalignments with text prompts, especially when the prompts describe complex scenes with multiple objects and attributes. To address this, we introduce VideoRepair, a novel model-agnostic, training-free video refinement framework that automatically identifies fine-grained text-video misalignments and generates explicit spatial and textual feedback, enabling a T2V diffusion model to perform targeted, localized refinements. VideoRepair consists of four stages: In (1) video evaluation, we detect misalignments by generating fine-grained evaluation questions and answering those questions with MLLM. In (2) refinement planning, we identify accurately generated objects and then create localized prompts to refine other areas in the video. Next, in (3) region decomposition, we segment the correctly generated area using a combined grounding module. We regenerate the video by adjusting the misaligned regions while preserving the correct regions in (4) localized refinement. On two popular video generation benchmarks (EvalCrafter and T2V-CompBench), VideoRepair substantially outperforms recent baselines across various text-video alignment metrics. We provide a comprehensive analysis of VideoRepair components and qualitative examples.