VideoScene: Distilling Video Diffusion Model to Generate 3D Scenes in One Step

Hanyang Wang, Fangfu Liu, Jiawei Chi, Yueqi Duan

2025-04-03

Summary

This paper is about making it faster and easier to create 3D scenes from videos using AI.

What's the problem?

Creating 3D scenes from videos is difficult, and existing AI methods are often slow and produce unrealistic results.

What's the solution?

The researchers developed a system called VideoScene that uses a video diffusion model to generate 3D scenes in one step, making it faster and more efficient.

Why it matters?

This work matters because it can make it easier to create 3D models from videos, which could be used in areas like virtual reality and game development.

Abstract

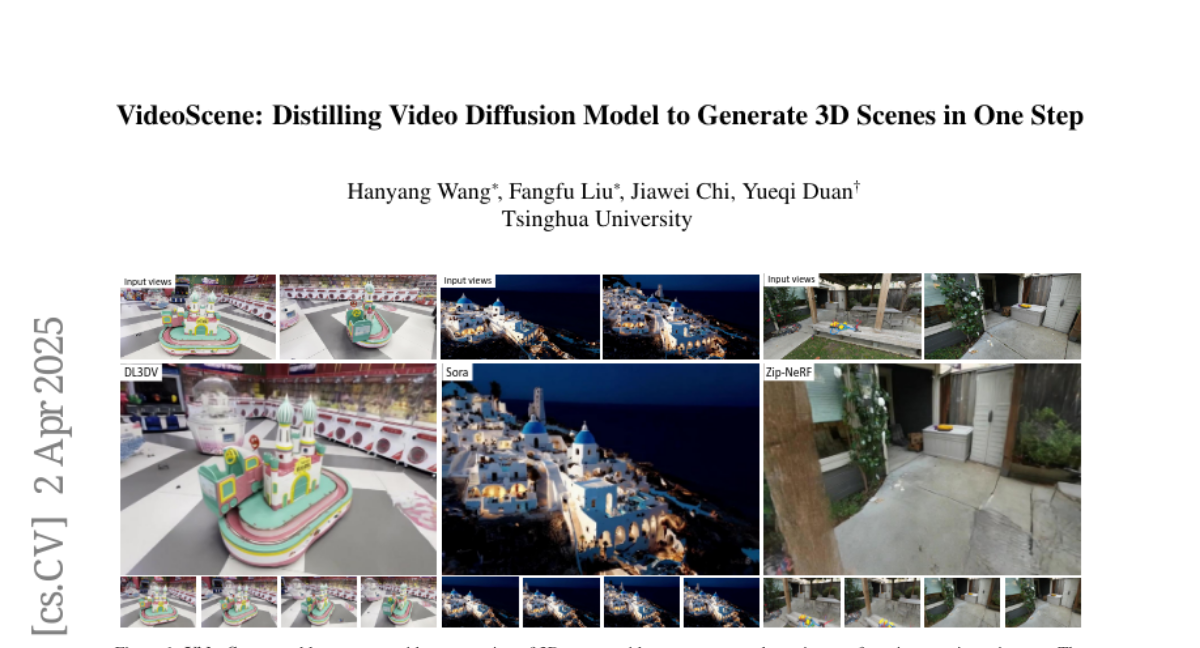

Recovering 3D scenes from sparse views is a challenging task due to its inherent ill-posed problem. Conventional methods have developed specialized solutions (e.g., geometry regularization or feed-forward deterministic model) to mitigate the issue. However, they still suffer from performance degradation by minimal overlap across input views with insufficient visual information. Fortunately, recent video generative models show promise in addressing this challenge as they are capable of generating video clips with plausible 3D structures. Powered by large pretrained video diffusion models, some pioneering research start to explore the potential of video generative prior and create 3D scenes from sparse views. Despite impressive improvements, they are limited by slow inference time and the lack of 3D constraint, leading to inefficiencies and reconstruction artifacts that do not align with real-world geometry structure. In this paper, we propose VideoScene to distill the video diffusion model to generate 3D scenes in one step, aiming to build an efficient and effective tool to bridge the gap from video to 3D. Specifically, we design a 3D-aware leap flow distillation strategy to leap over time-consuming redundant information and train a dynamic denoising policy network to adaptively determine the optimal leap timestep during inference. Extensive experiments demonstrate that our VideoScene achieves faster and superior 3D scene generation results than previous video diffusion models, highlighting its potential as an efficient tool for future video to 3D applications. Project Page: https://hanyang-21.github.io/VideoScene