ViPer: Visual Personalization of Generative Models via Individual Preference Learning

Sogand Salehi, Mahdi Shafiei, Teresa Yeo, Roman Bachmann, Amir Zamir

2024-07-25

Summary

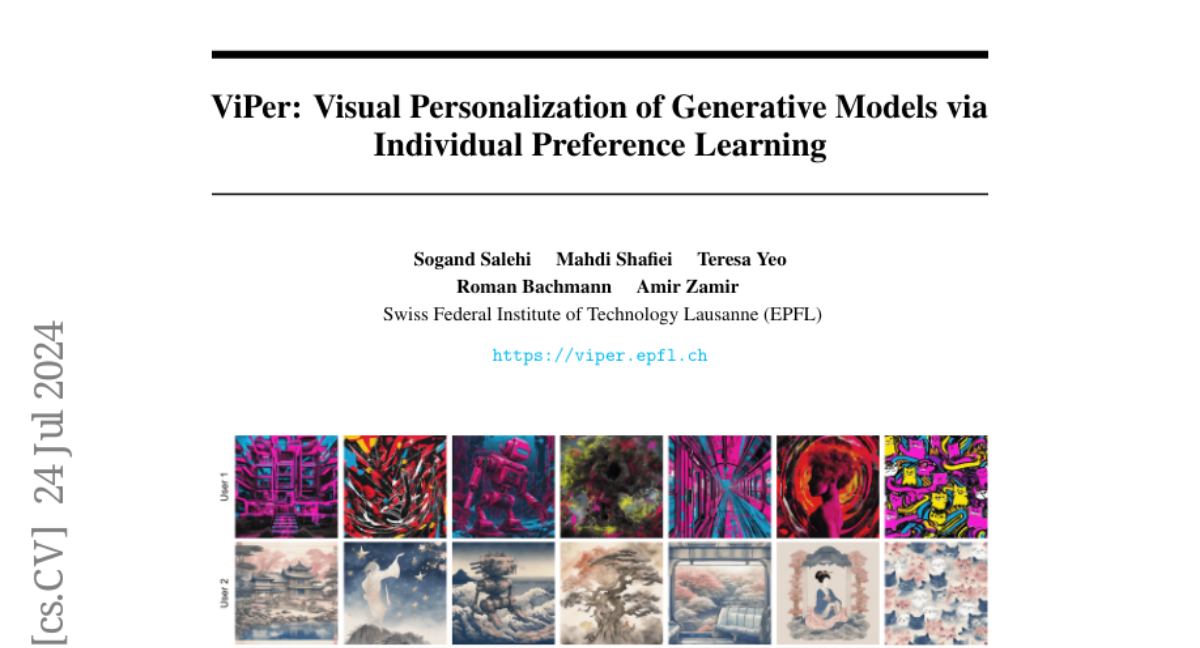

This paper presents ViPer, a method for personalizing image generation to match individual users' preferences. It allows users to influence the images created from the same prompt based on their unique tastes.

What's the problem?

Current image generation models tend to produce images that appeal to a general audience, which means they may not satisfy specific individual preferences. Users often have to manually tweak prompts multiple times to get an image they like, which is inefficient and frustrating. This lack of personalization makes it hard for people to get the exact images they want.

What's the solution?

ViPer solves this problem by first capturing a user's visual preferences through a one-time process where they comment on a small selection of images, explaining what they like or dislike about each one. Using these comments, the system identifies structured visual attributes that represent the user's preferences. These attributes are then used to guide a text-to-image model, allowing it to generate images that are more aligned with what the user wants without requiring constant adjustments to the prompts.

Why it matters?

This research is important because it enhances how generative models create images, making them more user-friendly and tailored to individual tastes. By allowing for personalized image generation, ViPer can improve user satisfaction and engagement in various applications, such as art creation, marketing, and social media.

Abstract

Different users find different images generated for the same prompt desirable. This gives rise to personalized image generation which involves creating images aligned with an individual's visual preference. Current generative models are, however, unpersonalized, as they are tuned to produce outputs that appeal to a broad audience. Using them to generate images aligned with individual users relies on iterative manual prompt engineering by the user which is inefficient and undesirable. We propose to personalize the image generation process by first capturing the generic preferences of the user in a one-time process by inviting them to comment on a small selection of images, explaining why they like or dislike each. Based on these comments, we infer a user's structured liked and disliked visual attributes, i.e., their visual preference, using a large language model. These attributes are used to guide a text-to-image model toward producing images that are tuned towards the individual user's visual preference. Through a series of user studies and large language model guided evaluations, we demonstrate that the proposed method results in generations that are well aligned with individual users' visual preferences.