Visually Interpretable Subtask Reasoning for Visual Question Answering

Yu Cheng, Arushi Goel, Hakan Bilen

2025-05-15

Summary

This paper introduces VISTAR, a new method that helps AI models answer questions about images more accurately and in a way that is easier for people to follow, by breaking the reasoning process into clear steps.

What's the problem?

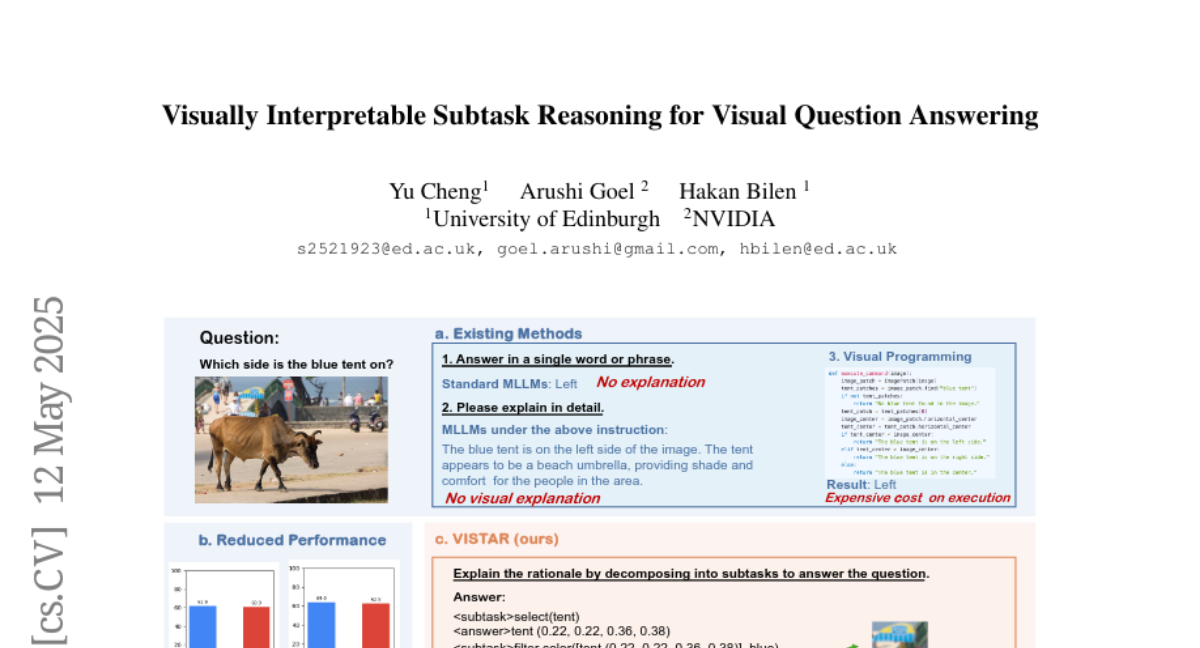

When AI models answer questions about pictures, they often give an answer without showing how they got there. That makes it hard for people to trust or check the reasoning, and the answers are not always accurate.

What's the solution?

The researchers improved multimodal language models by teaching them to generate step-by-step explanations, or reasoning sequences, when answering visual questions. This makes the AI's thinking process more structured and transparent, which also leads to better answers.
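To make the idea of a reasoning sequence concrete, here is a minimal Python sketch of what a step-by-step trace could look like as data. It is only an illustration: the `ReasoningStep` fields, the `format_trace` helper, the text layout, and the example question are all assumptions made for this sketch, not the format VISTAR actually uses.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ReasoningStep:
    """One step of a hypothetical reasoning trace (illustrative only)."""
    subtask: str                                    # what this step tries to do
    result: str                                     # what the step produced
    box: Optional[Tuple[int, int, int, int]] = None # optional image region (x1, y1, x2, y2)


def format_trace(question: str, steps: List[ReasoningStep]) -> str:
    """Render a question and its steps as readable text.

    Assumption for this sketch: the last step's result is the final answer.
    """
    if not steps:
        raise ValueError("a reasoning trace needs at least one step")
    lines = [f"Question: {question}"]
    for i, step in enumerate(steps, start=1):
        region = f" @ {step.box}" if step.box is not None else ""
        lines.append(f"Step {i}: {step.subtask} -> {step.result}{region}")
    lines.append(f"Answer: {steps[-1].result}")
    return "\n".join(lines)


# Made-up example: each step can be checked against the image on its own.
steps = [
    ReasoningStep("find the cup", "cup", box=(40, 60, 120, 150)),
    ReasoningStep("find what the cup is on", "table", box=(0, 140, 300, 260)),
    ReasoningStep("read the color of the table", "brown"),
]
trace = format_trace("What color is the table under the cup?", steps)
print(trace)
```

Because every step names its subtask and, where relevant, the image region it refers to, a reader can inspect each intermediate result rather than only the final answer.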

Why does it matter?

This matters because it lets people see how the AI arrives at its answers, which makes the technology more trustworthy and useful. That is especially valuable where accuracy and clear explanations are important, such as education or medical imaging.

Abstract

VISTAR enhances multimodal large language models by generating structured reasoning sequences to improve accuracy and interpretability.