VisualWebInstruct: Scaling up Multimodal Instruction Data through Web Search

Yiming Jia, Jiachen Li, Xiang Yue, Bo Li, Ping Nie, Kai Zou, Wenhu Chen

2025-03-14

Summary

This paper introduces VisualWebInstruct, a method for building roughly 900,000 question-answer training examples from web images and text to train AI models that reason over both pictures and words.

What's the problem?

AI models struggle with tasks that require reasoning over images and text together, such as solving math problems from diagrams, because high-quality training examples for this kind of reasoning are scarce.

What's the solution?

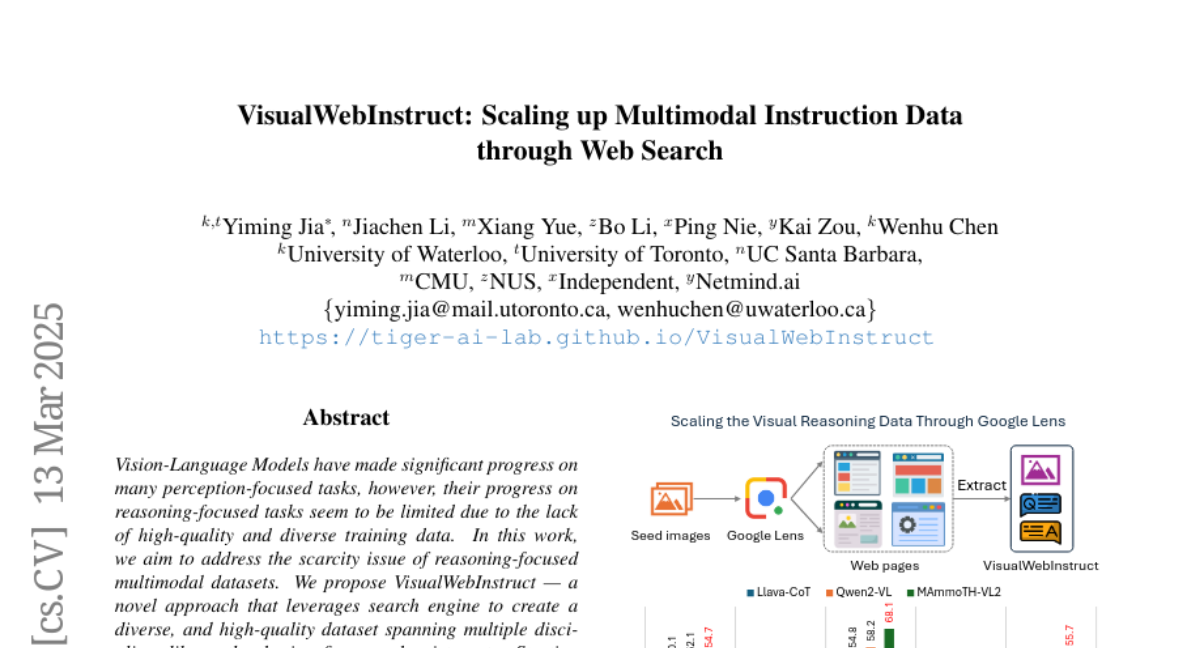

Starting from 30,000 seed images, the researchers used Google Image Search to find web pages containing similar images, then turned the collected content into approximately 900,000 question-answer pairs using AI tools like GPT-4 to teach models step-by-step reasoning.

Why does it matter?

This helps create smarter AI assistants for homework help, science projects, or real-world problem-solving by teaching them to 'think' through visual and text challenges together.

Abstract

Vision-Language Models have made significant progress on many perception-focused tasks. However, their progress on reasoning-focused tasks seems to be limited by the lack of high-quality and diverse training data. In this work, we aim to address the scarcity of reasoning-focused multimodal datasets. We propose VisualWebInstruct, a novel approach that leverages a search engine to create a diverse, high-quality dataset spanning multiple disciplines such as math, physics, finance, and chemistry. Starting with 30,000 meticulously selected seed images, we employ Google Image search to identify websites containing similar images. We collect and process the HTML from over 700K unique URL sources. Through a pipeline of content extraction, filtering, and synthesis, we build a dataset of approximately 900K question-answer pairs, with 40% being visual QA pairs and the rest text QA pairs. Models fine-tuned on VisualWebInstruct demonstrate significant performance gains: (1) training from Llava-OV-mid shows absolute gains of 10-20 percentage points across benchmarks, and (2) training from MAmmoTH-VL shows a 5% absolute gain. Our best model, MAmmoTH-VL2, shows state-of-the-art performance within the 10B parameter class on MMMU-Pro-std (40.7%), MathVerse (42.6%), and DynaMath (55.7%). These results highlight the effectiveness of our dataset in enhancing VLMs' reasoning capabilities for complex multimodal tasks.
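To make the shape of the pipeline concrete, here is a minimal sketch of the bookkeeping around its stages: deduplicating source URLs, filtering extracted question-answer candidates, and measuring the share of visual pairs. It is an illustration under stated assumptions, not the authors' implementation: the `QAPair` type, the helper names, the example URLs, and the empty-field filter are all hypothetical, and the search, HTML extraction, and synthesis steps are replaced by hard-coded toy data.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class QAPair:
    """Hypothetical record for one question-answer pair."""
    question: str
    answer: str
    image_url: Optional[str] = None  # set only for visual QA pairs

    @property
    def is_visual(self) -> bool:
        return self.image_url is not None


def dedupe_urls(urls: Iterable[str]) -> List[str]:
    """Keep the first occurrence of each URL, preserving order."""
    return list(dict.fromkeys(urls))


def filter_pairs(pairs: Iterable[QAPair]) -> List[QAPair]:
    """Toy filter (an assumption): drop pairs with an empty question or answer."""
    return [p for p in pairs if p.question.strip() and p.answer.strip()]


def visual_share(pairs: List[QAPair]) -> float:
    """Fraction of pairs that carry an image."""
    return sum(p.is_visual for p in pairs) / len(pairs) if pairs else 0.0


# Hypothetical search hits for two seed images; one URL is returned twice.
urls = dedupe_urls([
    "https://example.com/a",
    "https://example.com/b",
    "https://example.com/a",
])

# Hypothetical extraction output; a real pipeline would parse each page's HTML.
candidates = [
    QAPair("Find x in the triangle.", "x = 5", image_url="https://example.com/a/tri.png"),
    QAPair("What is 2 + 2?", "4"),
    QAPair("Label the circuit.", "", image_url="https://example.com/b/c.png"),
]
dataset = filter_pairs(candidates)
```

Calling `visual_share(dataset)` on the toy data gives the fraction of surviving pairs that carry an image. For scale, the figures in the abstract imply roughly 0.40 × 900K ≈ 360K visual QA pairs and about 540K text QA pairs.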