VisuoThink: Empowering LVLM Reasoning with Multimodal Tree Search

Yikun Wang, Siyin Wang, Qinyuan Cheng, Zhaoye Fei, Liang Ding, Qipeng Guo, Dacheng Tao, Xipeng Qiu

2025-04-15

Summary

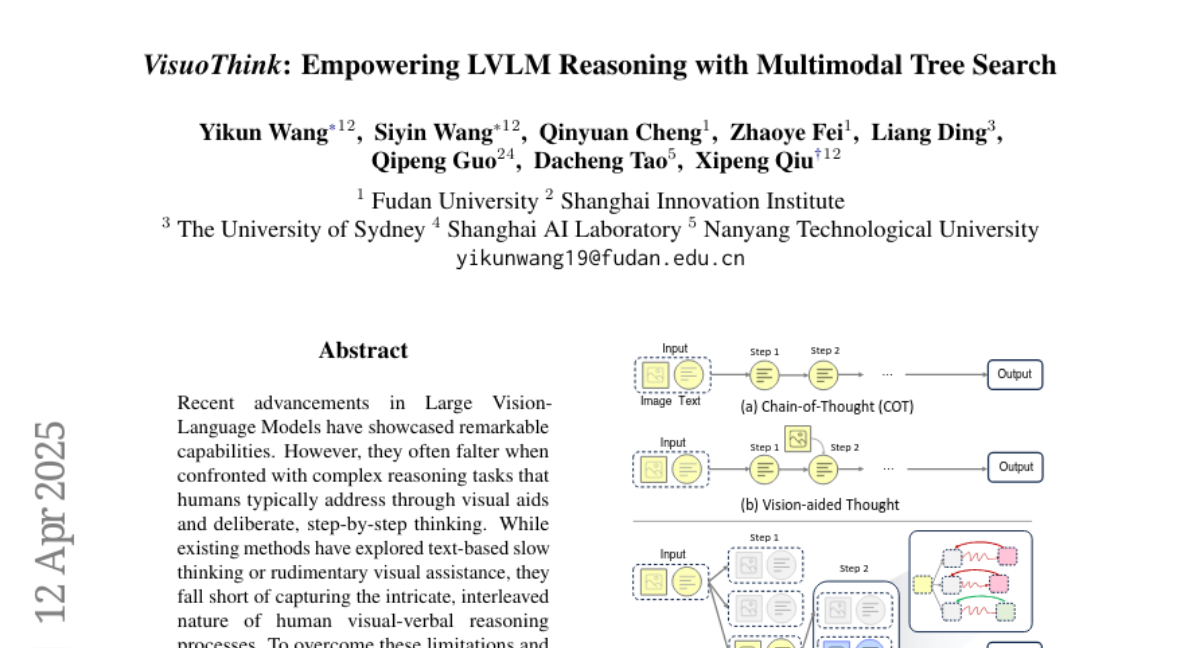

This paper talks about VisuoThink, a new AI system that helps models get better at solving problems that involve both pictures and words by using a method called multimodal tree search. VisuoThink allows the AI to break down tough questions step by step, using both visual and language information together.

What's the problem?

The problem is that most AI models struggle when they have to use both what they see and what they read to answer complicated questions or solve puzzles. They often can't connect the dots between images and text, which makes them less effective at tasks that need both types of understanding.

What's the solution?

The researchers created VisuoThink to combine visuospatial reasoning (how things are arranged in space) with language reasoning. The model uses a tree search approach, which means it explores different possible steps and solutions in a smart way, allowing it to handle more complex problems and even get better over time without needing a lot of extra training data.

Why it matters?

This work matters because it brings us closer to having AI that can truly understand and reason about the world in a way that's similar to humans, who naturally use both pictures and words to figure things out. VisuoThink could help with education, science, and any area where understanding both images and language is important.

Abstract

VisuoThink enhances visual-verbal reasoning by combining visuospatial and linguistic domains, enabling progressive reasoning and unsupervised scaling for complex tasks.