Vivid4D: Improving 4D Reconstruction from Monocular Video by Video Inpainting

Jiaxin Huang, Sheng Miao, BangBnag Yang, Yuewen Ma, Yiyi Liao

2025-04-17

Summary

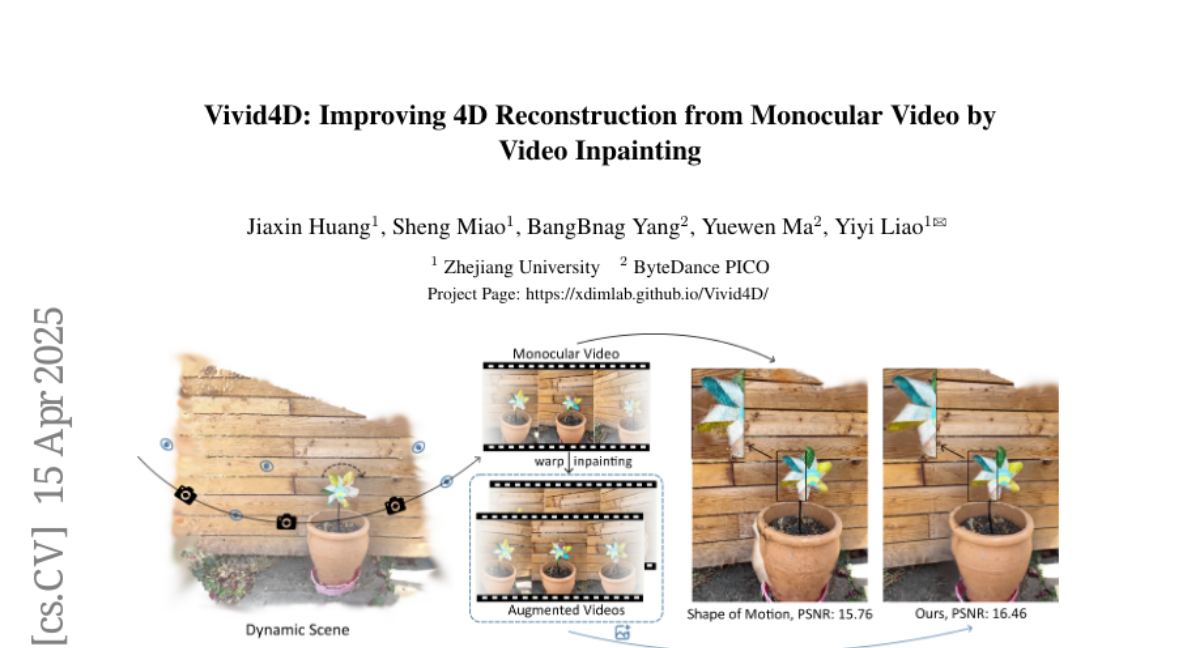

This paper talks about Vivid4D, a new technique that helps computers create detailed 4D models of scenes using just a regular video from one camera angle, by filling in missing views and information.

What's the problem?

The problem is that making a full 4D reconstruction of a scene—meaning a 3D model that changes over time—usually requires videos from many different angles. When you only have one camera, a lot of details are missing, so the computer struggles to build a complete and realistic model.

What's the solution?

The researchers combined two types of knowledge: geometric priors, which are rules about how shapes and spaces work, and generative priors, which help the computer imagine what unseen parts might look like. By using these together, their method can 'inpaint' or fill in the missing parts, allowing the computer to create multi-view videos and much better 4D reconstructions from just a single video.

Why it matters?

This matters because it makes it possible to create realistic 4D models from simple videos, which can be super helpful for things like virtual reality, special effects in movies, and studying real-world events without needing lots of expensive cameras.

Abstract

A method integrates geometric and generative priors for synthesizing multi-view videos from a single viewpoint, enhancing 4D scene reconstruction.