VLM-R1: A Stable and Generalizable R1-style Large Vision-Language Model

Haozhan Shen, Peng Liu, Jingcheng Li, Chunxin Fang, Yibo Ma, Jiajia Liao, Qiaoli Shen, Zilun Zhang, Kangjia Zhao, Qianqian Zhang, Ruochen Xu, Tiancheng Zhao

2025-04-14

Summary

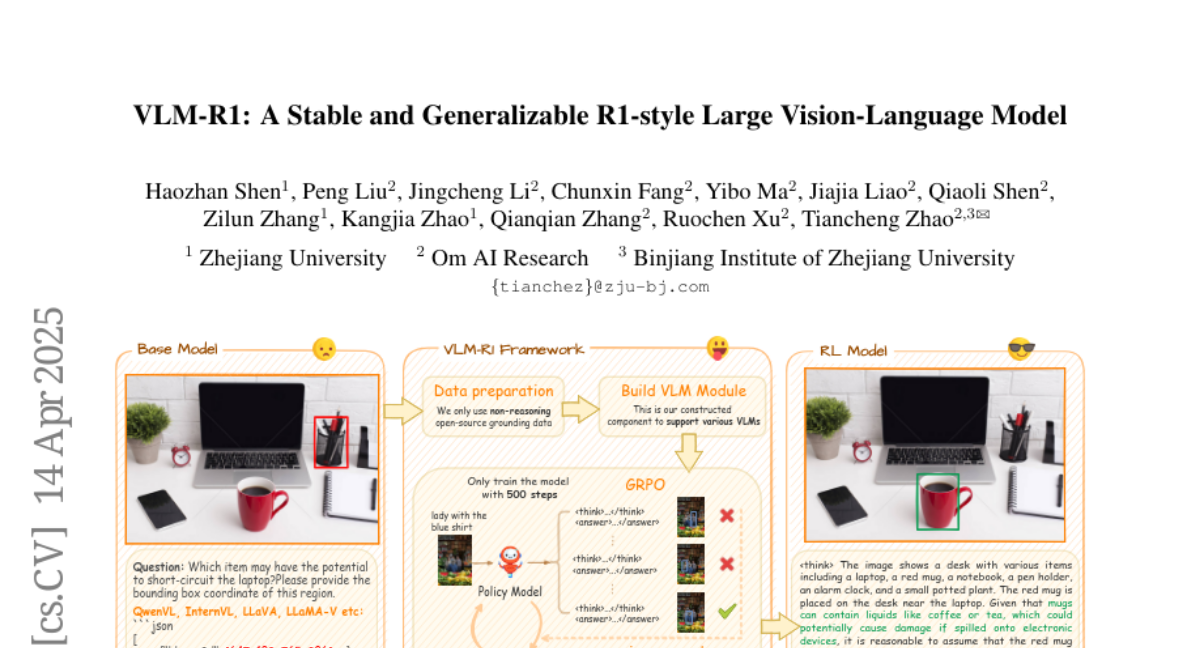

This paper talks about VLM-R1, a new way to train vision-language models (AIs that understand both pictures and text) so they can reason about images more accurately and handle a wider variety of tasks. The method uses reinforcement learning with rule-based rewards to help the model learn how to solve visual problems, like finding objects or understanding descriptions, and to generalize better to new situations.

What's the problem?

The problem is that current vision-language models often struggle with complex reasoning tasks, especially when they need to connect what they see in an image with what is described in text. Traditional training methods, like supervised fine-tuning, can improve performance but don't always help the models handle new or unfamiliar tasks. Creating high-quality training data with human feedback is also expensive and time-consuming.

What's the solution?

To solve this, the researchers designed a reinforcement learning framework that uses rule-based rewards instead of relying on human-annotated data. This system gives the AI feedback based on clear rules about whether it solved the visual task correctly, allowing the model to learn from its own results. They also introduced a method called Group Relative Policy Optimization (GRPO), which helps the model compare its different answers and learn which ones are better. By continuously updating the rules during training, the model keeps improving and avoids getting stuck on bad habits.

Why it matters?

This work matters because it makes vision-language models smarter and more flexible without needing tons of expensive human feedback. With VLM-R1, AI can better understand and reason about images in many different scenarios, making it more useful for things like searching through photos, understanding diagrams, or helping with real-world tasks that require both vision and language skills.

Abstract

A reinforcement learning framework extends the capabilities of Vision-Language Models (VLMs) in visual reasoning by leveraging rule-based reward formulations, achieving competitive performance and superior generalization compared to supervised fine-tuning.