VLog: Video-Language Models by Generative Retrieval of Narration Vocabulary

Kevin Qinghong Lin, Mike Zheng Shou

2025-03-13

Summary

This paper presents VLog, an AI tool that describes videos by breaking them into simple everyday actions (like 'turning off an alarm') and organizing those actions into a hierarchical dictionary of events.

What's the problem?

Existing AI video captioning tools struggle to describe videos accurately without large amounts of labeled examples, and they miss important details such as how actions relate in time (e.g., 'before' vs. 'after').

What's the solution?

VLog builds on a lightweight language model (GPT-2) and treats whole narrations, rather than word pieces, as its vocabulary. This 'action dictionary' groups events under broader scenarios (like kitchen activities) and can be extended with new actions on the fly during inference, making narrations more precise.
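The dictionary idea can be pictured as a two-level index: a broad scenario (e.g., kitchen) narrows the search to a small set of specific events, an optional postfix (e.g., by the left hand) refines an event, and new events can be registered when they are first encountered. The sketch below is a minimal, hypothetical illustration of that structure only; the class and method names (`HierarchicalVocab`, `add_event`, `lookup`) are assumptions and are not taken from the VLog code.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class HierarchicalVocab:
    """Hypothetical two-level narration vocabulary: scenario -> events."""

    # Maps a broad scenario (e.g. "kitchen") to its specific event narrations.
    scenarios: dict[str, set[str]] = field(default_factory=dict)
    # Postfixes that may refine any event (e.g. "by the left hand").
    postfixes: set[str] = field(default_factory=set)

    def add_event(self, scenario: str, event: str) -> None:
        """Vocabulary update: register a novel event under its scenario."""
        self.scenarios.setdefault(scenario, set()).add(event)

    def lookup(self, scenario: str, event: str, postfix: str | None = None) -> str | None:
        """Resolve an event by first narrowing to its scenario.

        Returns the full narration, or None if the scenario, event,
        or postfix is not in the vocabulary.
        """
        events = self.scenarios.get(scenario)
        if events is None or event not in events:
            return None
        if postfix is None:
            return event
        if postfix not in self.postfixes:
            return None
        return f"{event} {postfix}"


vocab = HierarchicalVocab(postfixes={"by the left hand"})
vocab.add_event("kitchen", "cut a tomato")
print(vocab.lookup("kitchen", "cut a tomato", "by the left hand"))  # cut a tomato by the left hand
print(vocab.lookup("garden", "cut a tomato"))  # None
```

Checking the scenario first means only that scenario's events are searched, which is the efficiency argument the summary alludes to; how VLog actually builds and stores its hierarchy is described in the paper, not here.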

Why does it matter?

This helps create better video descriptions for things like accessibility features or automatic content tagging, making videos easier to search and understand without needing huge AI systems.

Abstract

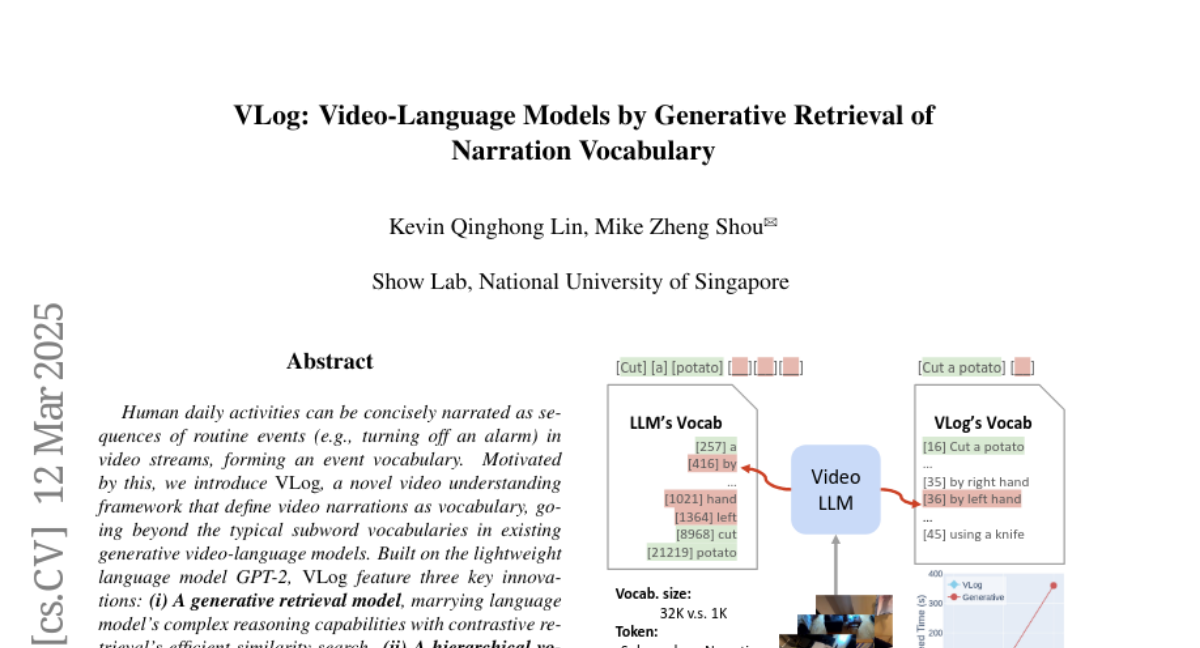

Human daily activities can be concisely narrated as sequences of routine events (e.g., turning off an alarm) in video streams, forming an event vocabulary. Motivated by this, we introduce VLog, a novel video understanding framework that defines video narrations as vocabulary, going beyond the typical subword vocabularies in existing generative video-language models. Built on the lightweight language model GPT-2, VLog features three key innovations: (i) A generative retrieval model, marrying the language model's complex reasoning capabilities with contrastive retrieval's efficient similarity search. (ii) A hierarchical vocabulary derived from large-scale video narrations using our narration pair encoding algorithm, enabling efficient indexing of specific events (e.g., cutting a tomato) by identifying broader scenarios (e.g., kitchen) with expressive postfixes (e.g., by the left hand). (iii) A vocabulary update strategy leveraging generative models to extend the vocabulary for novel events encountered during inference. To validate our approach, we introduce VidCap-Eval, a development set requiring concise narrations with reasoning relationships (e.g., before and after). Experiments on EgoSchema, COIN, and HiREST further demonstrate the effectiveness of VLog, highlighting its ability to generate concise, contextually accurate, and efficient narrations, offering a novel perspective on video understanding. Code is released at https://github.com/showlab/VLog.
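To make the retrieval half of "generative retrieval" concrete: instead of decoding a narration token by token, the model's output can be compared against precomputed embeddings of every vocabulary narration, and the closest entries are returned. The sketch below assumes a query vector is already available (in VLog it would come from the GPT-2-based model, which is not reproduced here) and uses plain cosine similarity over toy 3-dimensional embeddings; the function names and the embedding values are hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve_narration(query: list[float], vocab: dict[str, list[float]], k: int = 1) -> list[str]:
    """Return the k narrations whose embeddings are most similar to the query.

    The query is assumed to come from a language model's output (hypothetical
    here); selecting a narration is a single similarity search over the whole
    vocabulary rather than token-by-token decoding.
    """
    ranked = sorted(vocab, key=lambda name: cosine(query, vocab[name]), reverse=True)
    return ranked[:k]


# Toy embeddings standing in for learned narration embeddings.
vocab = {
    "turn off an alarm": [1.0, 0.0, 0.0],
    "cut a tomato": [0.0, 1.0, 0.0],
    "wash the dishes": [0.0, 0.7, 0.7],
}
print(retrieve_narration([0.1, 0.9, 0.2], vocab))  # ['cut a tomato']
```

A linear scan is used only for readability; a real system with a large vocabulary would typically batch the similarity computation or use an approximate nearest-neighbor index.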