VRBench: A Benchmark for Multi-Step Reasoning in Long Narrative Videos

Jiashuo Yu, Yue Wu, Meng Chu, Zhifei Ren, Zizheng Huang, Pei Chu, Ruijie Zhang, Yinan He, Qirui Li, Songze Li, Zhenxiang Li, Zhongying Tu, Conghui He, Yu Qiao, Yali Wang, Yi Wang, Limin Wang

2025-06-15

Summary

This paper introduces VRBench, a benchmark that tests how well AI models understand long narrative videos: whether they can reason through many steps and whether that reasoning holds together logically over time.

What's the problem?

Existing video-understanding benchmarks mostly use short clips or simple questions, so they miss the challenge of reasoning through longer stories, which takes multiple steps and requires tracking the order and logic of events.

What's the solution?

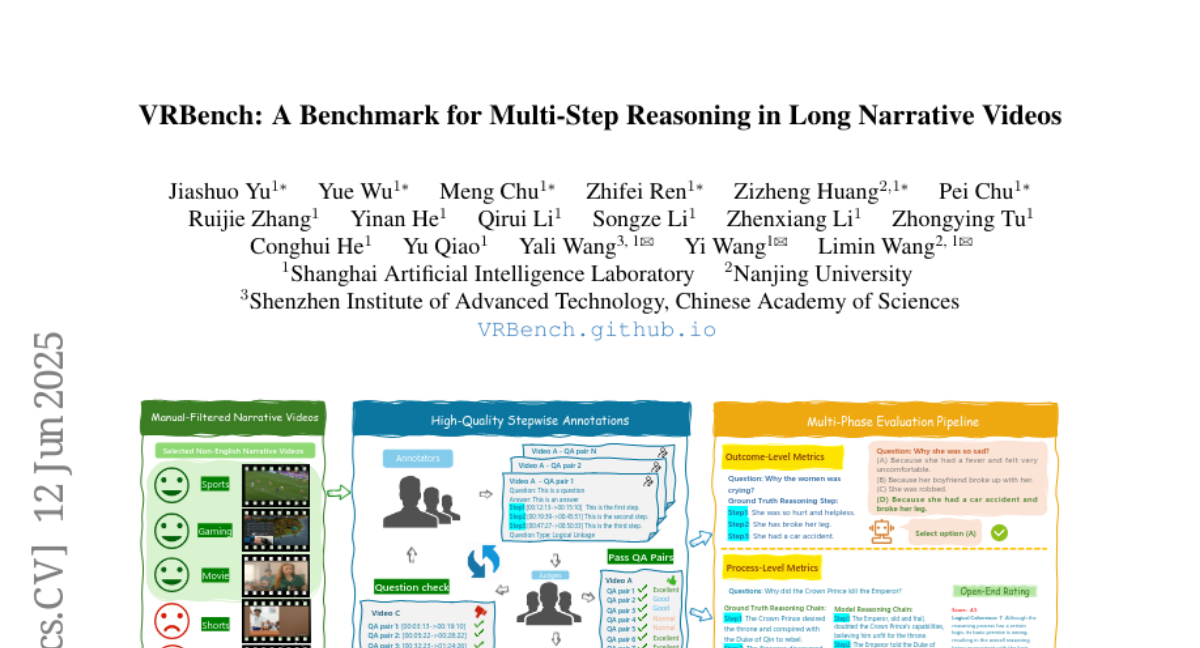

The authors created VRBench, which pairs over a thousand long videos with carefully chosen questions that require multi-step reasoning. Humans and AI work together to build detailed reasoning chains, and the benchmark checks both the final answer and each step of the reasoning to better evaluate a model's understanding.
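To make the idea of scoring both the final answer and the reasoning steps concrete, here is a minimal Python sketch. It is not VRBench's actual scoring code: the `Prediction` and `Reference` classes, the function names, and the exact-match comparison of steps are all assumptions made for illustration. A real pipeline would likely need a more flexible way to judge whether a predicted step matches a reference step.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """Hypothetical container for a model's output on one question."""
    answer: str        # final answer given by the model
    steps: list[str]   # reasoning steps, in the order the model produced them


@dataclass
class Reference:
    """Hypothetical container for the human-labeled ground truth."""
    answer: str
    steps: list[str]


def _norm(text: str) -> str:
    # Normalize case and surrounding whitespace before comparing strings.
    return text.strip().lower()


def _lcs_len(a: list[str], b: list[str]) -> int:
    # Length of the longest common subsequence of two step lists.
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, start=1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]


def outcome_score(pred: Prediction, ref: Reference) -> float:
    # Outcome level: 1.0 if the final answer matches, otherwise 0.0.
    return 1.0 if _norm(pred.answer) == _norm(ref.answer) else 0.0


def process_score(pred: Prediction, ref: Reference) -> float:
    # Process level (toy version): fraction of reference steps that the
    # model reproduces in the same relative order.
    if not ref.steps:
        return 0.0
    pred_steps = [_norm(s) for s in pred.steps]
    ref_steps = [_norm(s) for s in ref.steps]
    return _lcs_len(pred_steps, ref_steps) / len(ref_steps)


def evaluate(pred: Prediction, ref: Reference) -> dict[str, float]:
    # Report both levels separately so a lucky guess is distinguishable
    # from an answer backed by a valid reasoning chain.
    return {
        "outcome": outcome_score(pred, ref),
        "process": process_score(pred, ref),
    }
```

Keeping the two scores separate is the point of the sketch: a model can get the right answer with a broken chain of reasoning, or follow most of the right steps and still pick the wrong answer, and a single combined number would hide the difference.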

Why does it matter?

This matters because models that better understand long, complex videos can improve AI applications such as video analysis, storytelling, and education, as well as any task that needs a deep understanding of events over time.

Abstract

VRBench is a long narrative video benchmark designed to evaluate models' multi-step reasoning and procedural validity through human-labeled question-answering pairs and a human-AI collaborative framework with a multi-phase evaluation pipeline.