WALL-E 2.0: World Alignment by NeuroSymbolic Learning improves World Model-based LLM Agents

Siyu Zhou, Tianyi Zhou, Yijun Yang, Guodong Long, Deheng Ye, Jing Jiang, Chengqi Zhang

2025-04-23

Summary

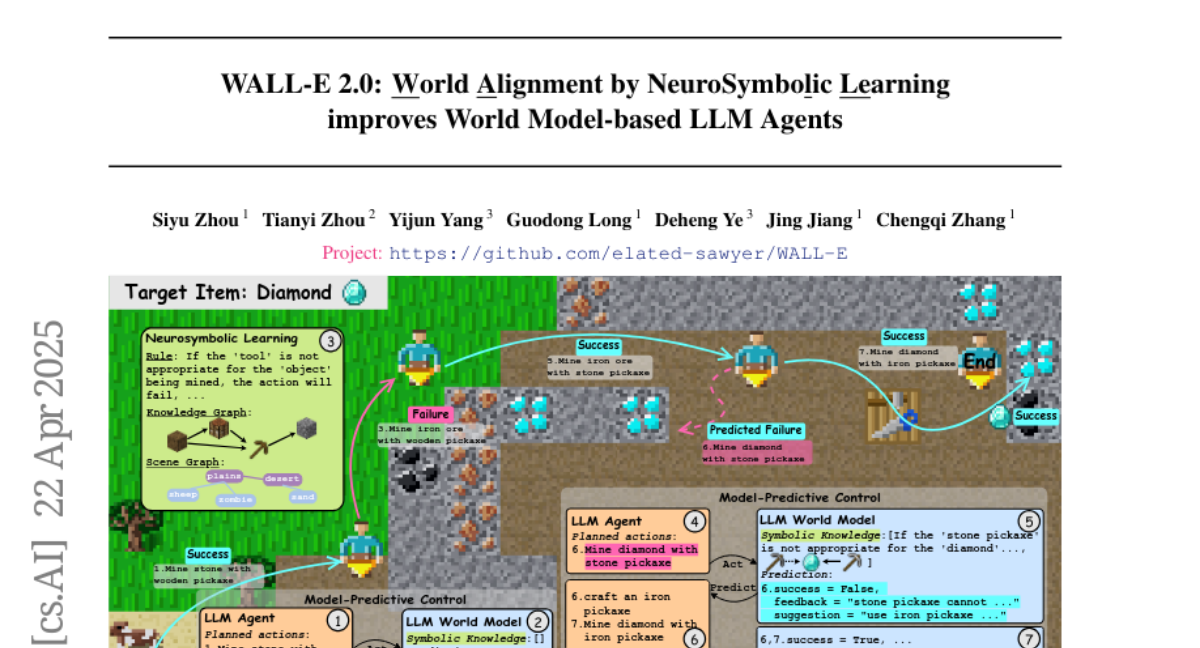

This paper introduces WALL-E 2.0, a system that helps large language model (LLM) agents understand and interact with their environment by combining two approaches: symbolic knowledge (such as logic or rules) and learning from data. The goal is to make LLM agents more accurate and more adaptable in new situations.

What's the problem?

The main problem is that current LLM agents often struggle in unfamiliar environments because they lack an accurate understanding of how those environments work. They can generate text or answer questions, but when they have to make decisions or plan in new situations, they tend to make mistakes or act inefficiently.

What's the solution?

WALL-E 2.0 addresses this by merging symbolic knowledge, which gives the agent a set of rules or facts about the world, with model-based planning, in which the agent looks ahead and predicts what will happen if it takes certain actions. By learning both from examples and from these rules, the agent makes better decisions and adapts more readily to new environments.
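To make the idea of combining rules with look-ahead planning concrete, here is a minimal sketch. It is not the authors' implementation: the toy crafting domain, the `Rule` class, `learned_predict`, `world_model`, and `plan` are all hypothetical names invented for illustration. A naive predictor (standing in for an LLM) assumes every action succeeds, a symbolic rule vetoes predictions that contradict known constraints, and a simple breadth-first planner searches for a goal using only the corrected predictions.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, int]


@dataclass
class Rule:
    """Hypothetical symbolic rule: the action fails when `violated` is true."""
    name: str
    violated: Callable[[State, str], bool]


def learned_predict(state: State, action: str) -> State:
    """Stand-in for a learned predictor (e.g. an LLM).

    Assumption for illustration: it is overly optimistic and predicts
    that every action succeeds, even when preconditions are not met.
    """
    nxt = dict(state)
    if action == "mine_wood":
        nxt["wood"] += 1
    elif action == "craft_table":
        nxt["wood"] -= 4
        nxt["table"] += 1
    return nxt


# Invented rule for the toy domain: crafting a table needs 4 wood.
RULES = [
    Rule("craft_table needs 4 wood",
         lambda s, a: a == "craft_table" and s["wood"] < 4),
]


def world_model(state: State, action: str) -> Tuple[State, Optional[str]]:
    """Rules are checked first; the learned prediction is used otherwise.

    Returns the predicted next state and the name of the violated rule
    (None if the action is predicted to succeed).
    """
    for rule in RULES:
        if rule.violated(state, action):
            return dict(state), rule.name  # action fails, state unchanged
    return learned_predict(state, action), None


def plan(start: State, actions: List[str],
         goal: Callable[[State], bool], max_depth: int = 6) -> Optional[List[str]]:
    """Breadth-first look-ahead through the world model.

    Returns the shortest action sequence predicted to reach the goal,
    or None if no such sequence exists within `max_depth` steps.
    """
    queue = deque([(start, [])])
    while queue:
        state, path = queue.popleft()
        if goal(state):
            return path
        if len(path) >= max_depth:
            continue
        for action in actions:
            nxt, violated = world_model(state, action)
            if violated is None:  # skip actions the rules predict will fail
                queue.append((nxt, path + [action]))
    return None


if __name__ == "__main__":
    start = {"wood": 0, "table": 0}
    print(plan(start, ["mine_wood", "craft_table"], lambda s: s["table"] >= 1))
```

In this toy setup the planner returns four `mine_wood` actions followed by `craft_table`. Without the rule, the optimistic predictor would accept `craft_table` immediately and the plan would fail in the real environment, which is the kind of mismatch that symbolic knowledge is meant to correct.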

Why does it matter?

This matters because it helps AI agents become more reliable and effective in real-world tasks, especially when they face new or unexpected challenges. Improving world understanding and planning in LLMs could lead to smarter virtual assistants, better robots, and more trustworthy AI systems overall.

Abstract

WALL-E 2.0 combines world alignment with a model-based agent to improve LLMs' performance as world models in new environments by integrating symbolic knowledge and efficient planning.