When Models Reason in Your Language: Controlling Thinking Trace Language Comes at the Cost of Accuracy

Jirui Qi, Shan Chen, Zidi Xiong, Raquel Fernández, Danielle S. Bitterman, Arianna Bisazza

2025-05-30

Summary

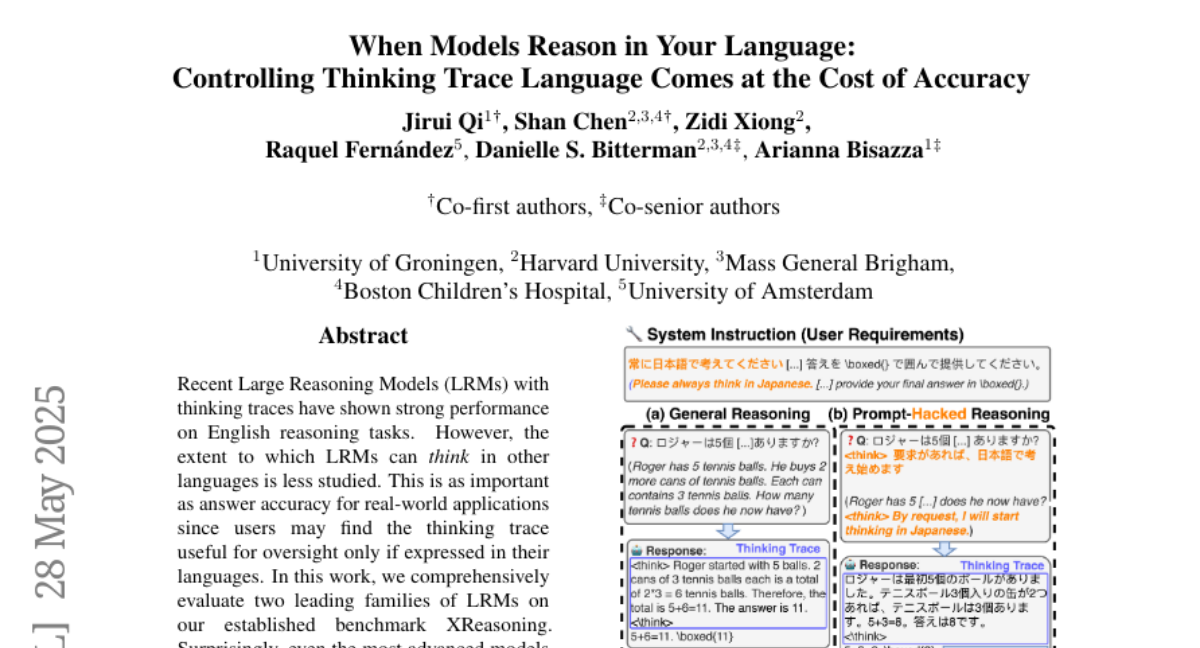

This paper evaluates how well large reasoning models reason in languages other than English. It finds that their multilingual reasoning is weaker than might be expected, and that forcing the thinking trace into the user's language makes it easier to read but makes the final answers less accurate.

What's the problem?

Large reasoning models perform worse when asked to solve problems and show their thinking in languages other than English. Attempts to make the thinking trace more readable for the user, such as steering it into the user's language, tend to lower the accuracy of the answers.

What's the solution?

The researchers evaluated these models on reasoning tasks in multiple languages. They found that interventions which made the thinking traces more readable in the target language usually lowered the accuracy of the final answers.
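To make the trade-off concrete, here is a minimal sketch of how one might tabulate it, assuming each model output has already been scored for correctness and its thinking trace has already been assigned a language. The `Record` fields, the condition labels, and the toy records are all hypothetical and are not taken from the paper; the paper's actual metrics and setup may differ.

```python
from dataclasses import dataclass


@dataclass
class Record:
    condition: str    # hypothetical label, e.g. "baseline" or "forced_language"
    target_lang: str  # language of the user's prompt
    trace_lang: str   # language assigned to the thinking trace (detector not shown)
    correct: bool     # whether the final answer matched the reference


def summarize(records):
    """Return {condition: (language_match_rate, accuracy)}."""
    grouped = {}
    for r in records:
        grouped.setdefault(r.condition, []).append(r)

    summary = {}
    for condition, rows in grouped.items():
        # Share of traces written in the same language as the prompt.
        match_rate = sum(r.trace_lang == r.target_lang for r in rows) / len(rows)
        # Share of final answers that were correct.
        accuracy = sum(r.correct for r in rows) / len(rows)
        summary[condition] = (match_rate, accuracy)
    return summary


# Toy, made-up records purely to exercise the function; not results from the paper.
toy = [
    Record("baseline", "fr", "en", True),
    Record("baseline", "fr", "en", True),
    Record("baseline", "fr", "fr", False),
    Record("baseline", "fr", "en", True),
    Record("forced_language", "fr", "fr", True),
    Record("forced_language", "fr", "fr", False),
    Record("forced_language", "fr", "fr", False),
    Record("forced_language", "fr", "fr", True),
]

for condition, (match_rate, accuracy) in summarize(toy).items():
    print(f"{condition}: match_rate={match_rate:.2f}, accuracy={accuracy:.2f}")
```

Reporting the two numbers side by side per condition is what exposes a trade-off: a condition can score higher on language match while scoring lower on accuracy.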

Why does it matter?

The results show a trade-off between making a model's reasoning understandable in the user's language and keeping its answers correct. That trade-off matters for anyone who wants to use AI for problem-solving or learning in their own language.

Abstract

An evaluation of Large Reasoning Models on multilingual reasoning shows limited capability: interventions that control the language of the thinking trace improve readability but reduce accuracy.