When Words Outperform Vision: VLMs Can Self-Improve Via Text-Only Training For Human-Centered Decision Making

Zhe Hu, Jing Li, Yu Yin

2025-03-26

Summary

This paper shows that vision-language models (VLMs), which make decisions from both images and text, can be outperformed by similarly sized language models that receive only text descriptions, especially on decisions that require understanding human needs and values.

What's the problem?

VLMs can struggle with decisions that require reasoning about human needs and values. In the authors' evaluation, language models given only text descriptions outperformed similarly sized VLMs given the actual images, which suggests that visual alignment may hinder the models' reasoning abilities.

What's the solution?

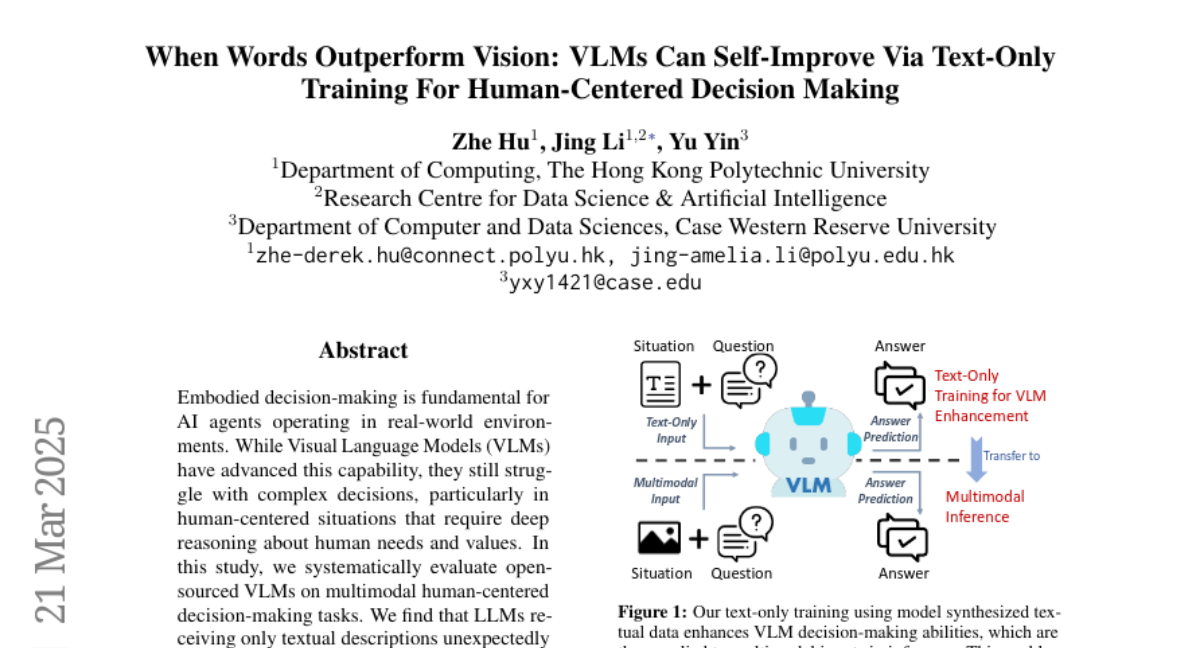

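The researchers improved VLM performance by training the models only on synthesized text data, with no image-text pairs. This strengthens the language component of the VLM, and the learned reasoning ability transfers to inference with images. The training data can be generated by the VLM's own LLM counterpart rather than by a larger teacher model such as GPT-4, so the model effectively improves itself.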

Why does it matter?

This work matters because it suggests a cheaper and more scalable way to improve AI decision making in complex, human-centered situations: it needs neither expensive image-text paired data nor a larger teacher model. That could be useful for AI agents that operate in real-world environments, such as helpful robots.

Abstract

Embodied decision-making is fundamental for AI agents operating in real-world environments. While Visual Language Models (VLMs) have advanced this capability, they still struggle with complex decisions, particularly in human-centered situations that require deep reasoning about human needs and values. In this study, we systematically evaluate open-sourced VLMs on multimodal human-centered decision-making tasks. We find that LLMs receiving only textual descriptions unexpectedly outperform their VLM counterparts of similar scale that process actual images, suggesting that visual alignment may hinder VLM abilities. To address this challenge, we propose a novel text-only training approach with synthesized textual data. This method strengthens VLMs' language components and transfers the learned abilities to multimodal inference, eliminating the need for expensive image-text paired data. Furthermore, we show that VLMs can achieve substantial performance gains through self-improvement, using training data generated by their LLM counterparts rather than relying on larger teacher models like GPT-4. Our findings establish a more efficient and scalable approach to enhancing VLMs' human-centered decision-making capabilities, opening new avenues for optimizing VLMs through self-improvement mechanisms.
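The text-only data synthesis described in the abstract can be pictured with a minimal sketch. Everything concrete here is an assumption made for illustration: the record fields (`description`, `question`, `options`), the prompt layout, and the `stub_generator` function standing in for the LLM counterpart are hypothetical, and the paper's actual prompts and training recipe are not given in this summary. The sketch only shows the general idea of building image-free training pairs whose targets come from a text generator.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class TextOnlyExample:
    prompt: str  # situation description, question, and options; no image
    target: str  # generated answer used as the training target


def format_prompt(situation: Dict) -> str:
    """Render one situation as a multiple-choice text prompt (hypothetical layout)."""
    options = "\n".join(
        f"{chr(ord('A') + i)}. {opt}"
        for i, opt in enumerate(situation["options"])
    )
    return (
        f"Situation: {situation['description']}\n"
        f"Question: {situation['question']}\n"
        f"Options:\n{options}\n"
        "Answer:"
    )


def build_text_only_dataset(
    situations: List[Dict],
    generate_answer: Callable[[str], str],
) -> List[TextOnlyExample]:
    """Pair each text prompt with an answer produced by the given generator."""
    dataset = []
    for situation in situations:
        prompt = format_prompt(situation)
        dataset.append(TextOnlyExample(prompt=prompt, target=generate_answer(prompt)))
    return dataset


def stub_generator(prompt: str) -> str:
    """Placeholder for the LLM counterpart; a real setup would call a model here."""
    return "B"


situations = [
    {
        "description": "An elderly person is standing at a crosswalk with heavy bags.",
        "question": "What should the assistant robot do?",
        "options": ["Keep walking", "Offer to carry the bags"],
    }
]

dataset = build_text_only_dataset(situations, stub_generator)
print(dataset[0].prompt)
print(dataset[0].target)
```

The resulting prompt and target pairs would then be used to fine-tune the language component of the VLM. That step is omitted here because it depends on the model and training framework.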