Where do Large Vision-Language Models Look at when Answering Questions?

Xiaoying Xing, Chia-Wen Kuo, Li Fuxin, Yulei Niu, Fan Chen, Ming Li, Ying Wu, Longyin Wen, Sijie Zhu

2025-03-21

Summary

This paper examines how large vision-language models (LVLMs), the kind of AI models behind chatbots that can interpret images, use the image when answering a question about it.

What's the problem?

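It is unclear which parts of an image these models rely on when they answer a question about it, or how much they rely on the image at all. Their free-form, variable-length answers and complex visual architectures (for example, multiple encoders and multi-resolution inputs) make this hard to interpret.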

What's the solution?

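The researchers extended existing heatmap visualization methods (such as iGOS++) to LVLMs, so that the image regions contributing to an open-ended answer can be highlighted. They also proposed a way to select the answer tokens that are visually relevant, and used both to analyze state-of-the-art LVLMs on benchmarks designed to require visual information.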

Why does it matter?

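This work matters because it helps us understand how these models work and whether they actually use the image in a meaningful way when answering questions. The analysis relates the focus region to answer correctness, compares visual attention across architectures, and examines the effect of LLM scale on visual understanding.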

Abstract

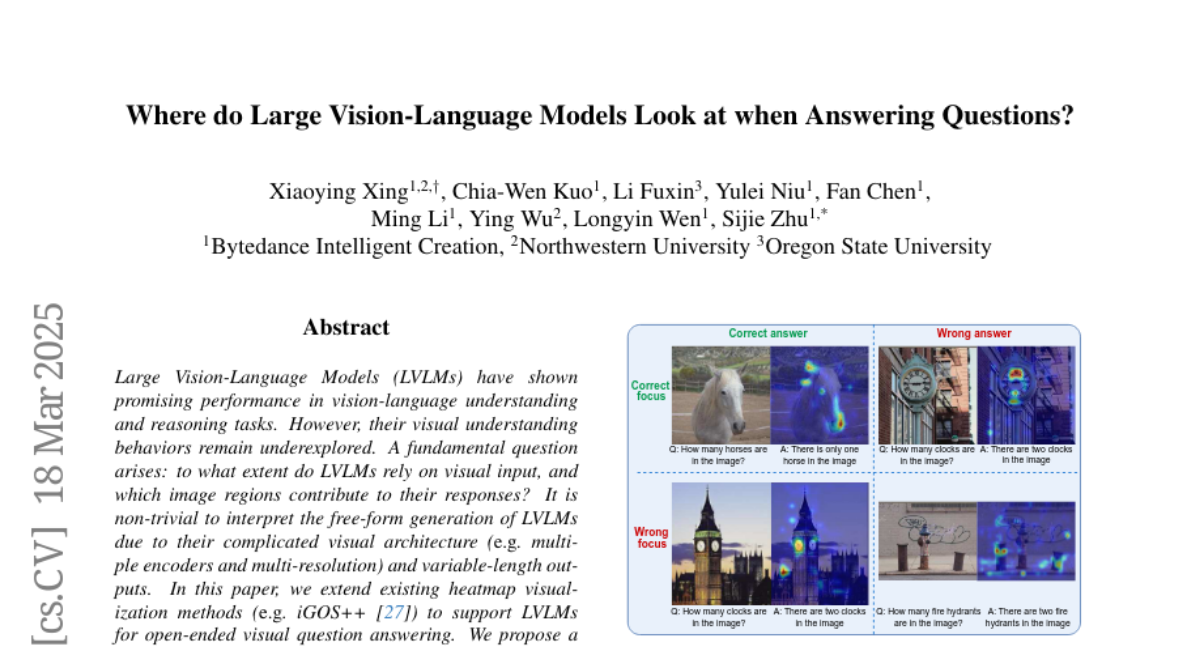

Large Vision-Language Models (LVLMs) have shown promising performance in vision-language understanding and reasoning tasks. However, their visual understanding behaviors remain underexplored. A fundamental question arises: to what extent do LVLMs rely on visual input, and which image regions contribute to their responses? It is non-trivial to interpret the free-form generation of LVLMs due to their complicated visual architecture (e.g., multiple encoders and multi-resolution) and variable-length outputs. In this paper, we extend existing heatmap visualization methods (e.g., iGOS++) to support LVLMs for open-ended visual question answering. We propose a method to select visually relevant tokens that reflect the relevance between the generated answers and the input image. Furthermore, we conduct a comprehensive analysis of state-of-the-art LVLMs on benchmarks designed to require visual information to answer. Our findings offer several insights into LVLM behavior, including the relationship between the focus region and answer correctness, differences in visual attention across architectures, and the impact of LLM scale on visual understanding. The code and data are available at https://github.com/bytedance/LVLM_Interpretation.
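
The abstract does not spell out how visually relevant tokens are selected, so the following is only a minimal sketch of one plausible criterion, not the authors' implementation. It assumes that per-token log-probabilities of the generated answer are available twice: once with the real image and once with an uninformative baseline image (for example, a heavily blurred one). A token is treated as visually relevant when removing the image information lowers its log-probability by more than a threshold. The function name `select_visual_tokens`, the threshold value, and the example numbers are all hypothetical.

```python
from typing import List, Sequence


def select_visual_tokens(
    logp_with_image: Sequence[float],
    logp_with_baseline: Sequence[float],
    threshold: float = 1.0,  # hypothetical cutoff, in nats
) -> List[int]:
    """Return indices of answer tokens that depend strongly on the image.

    A token counts as visually relevant when its log-probability with the
    real image exceeds its log-probability with the baseline image by more
    than `threshold`. This criterion is an assumption for illustration.
    """
    if len(logp_with_image) != len(logp_with_baseline):
        raise ValueError("both sequences must cover the same answer tokens")
    return [
        i
        for i, (with_img, with_base) in enumerate(
            zip(logp_with_image, logp_with_baseline)
        )
        if with_img - with_base > threshold
    ]


# Made-up numbers for the answer "The cat is black".
tokens = ["The", "cat", "is", "black"]
logp_image = [-0.1, -0.3, -0.05, -0.4]
logp_baseline = [-0.2, -3.0, -0.1, -2.5]

selected = select_visual_tokens(logp_image, logp_baseline)
print([tokens[i] for i in selected])  # prints ['cat', 'black']
```

In this toy case the function words barely change when the image is replaced, while the content words "cat" and "black" lose most of their probability, so only those two would be used as targets when computing a heatmap over the image.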