WikiAutoGen: Towards Multi-Modal Wikipedia-Style Article Generation

Zhongyu Yang, Jun Chen, Dannong Xu, Junjie Fei, Xiaoqian Shen, Liangbing Zhao, Chun-Mei Feng, Mohamed Elhoseiny

2025-03-26

Summary

This paper is about using AI to automatically create Wikipedia-style articles that include both text and images.

What's the problem?

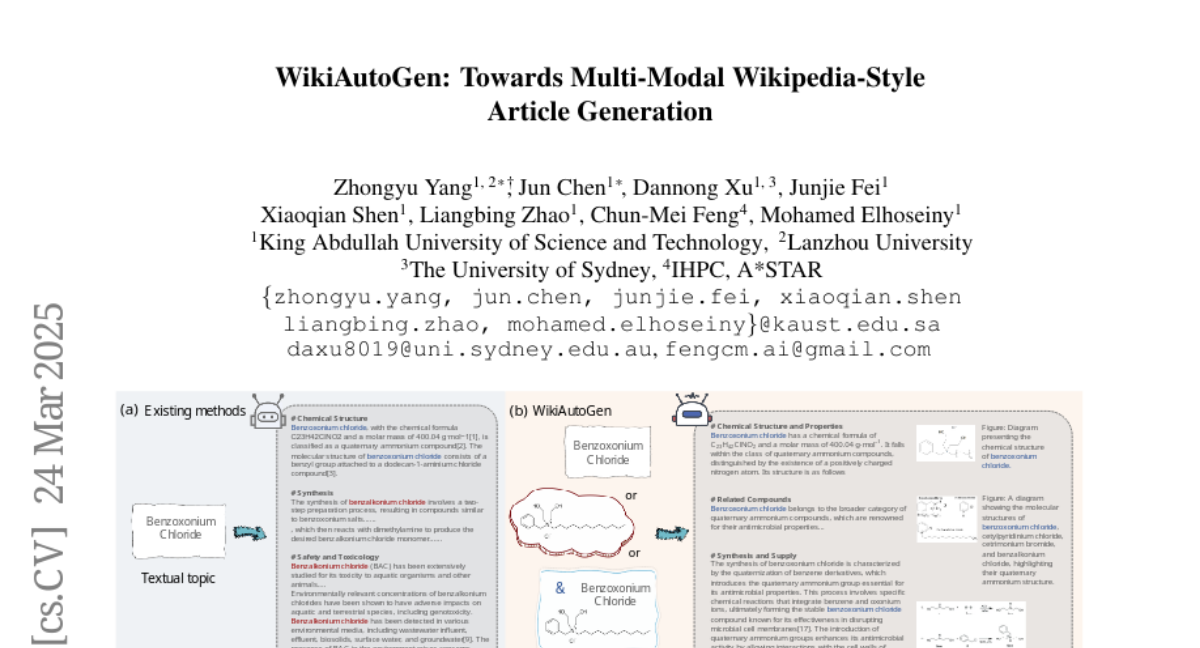

Creating high-quality Wikipedia articles takes a lot of time and effort from human editors. Current AI methods can generate text, but they often don't include images, which makes the articles less informative and engaging.

What's the solution?

The researchers developed a new AI system called WikiAutoGen that can automatically find and include relevant images in the articles it generates. It also uses a self-reflection mechanism to make sure the information is accurate and comprehensive.

Why it matters?

This work matters because it can help automate the creation of Wikipedia articles, making it easier to access and share knowledge.

Abstract

Knowledge discovery and collection are intelligence-intensive tasks that traditionally require significant human effort to ensure high-quality outputs. Recent research has explored multi-agent frameworks for automating Wikipedia-style article generation by retrieving and synthesizing information from the internet. However, these methods primarily focus on text-only generation, overlooking the importance of multimodal content in enhancing informativeness and engagement. In this work, we introduce WikiAutoGen, a novel system for automated multimodal Wikipedia-style article generation. Unlike prior approaches, WikiAutoGen retrieves and integrates relevant images alongside text, enriching both the depth and visual appeal of generated content. To further improve factual accuracy and comprehensiveness, we propose a multi-perspective self-reflection mechanism, which critically assesses retrieved content from diverse viewpoints to enhance reliability, breadth, and coherence, etc. Additionally, we introduce WikiSeek, a benchmark comprising Wikipedia articles with topics paired with both textual and image-based representations, designed to evaluate multimodal knowledge generation on more challenging topics. Experimental results show that WikiAutoGen outperforms previous methods by 8%-29% on our WikiSeek benchmark, producing more accurate, coherent, and visually enriched Wikipedia-style articles. We show some of our generated examples in https://wikiautogen.github.io/ .