WildIFEval: Instruction Following in the Wild

Gili Lior, Asaf Yehudai, Ariel Gera, Liat Ein-Dor

2025-03-13

Summary

This paper talks about WildIFEval, a test to check how well AI chatbots follow complex user instructions with multiple rules, using real-world examples where people ask for things like specific lengths, formats, or content styles.

What's the problem?

AI models struggle when users give them tricky instructions with lots of specific rules, like 'write a poem about cats, make it funny, and keep it under 10 lines,' because they often miss some parts of the request.

What's the solution?

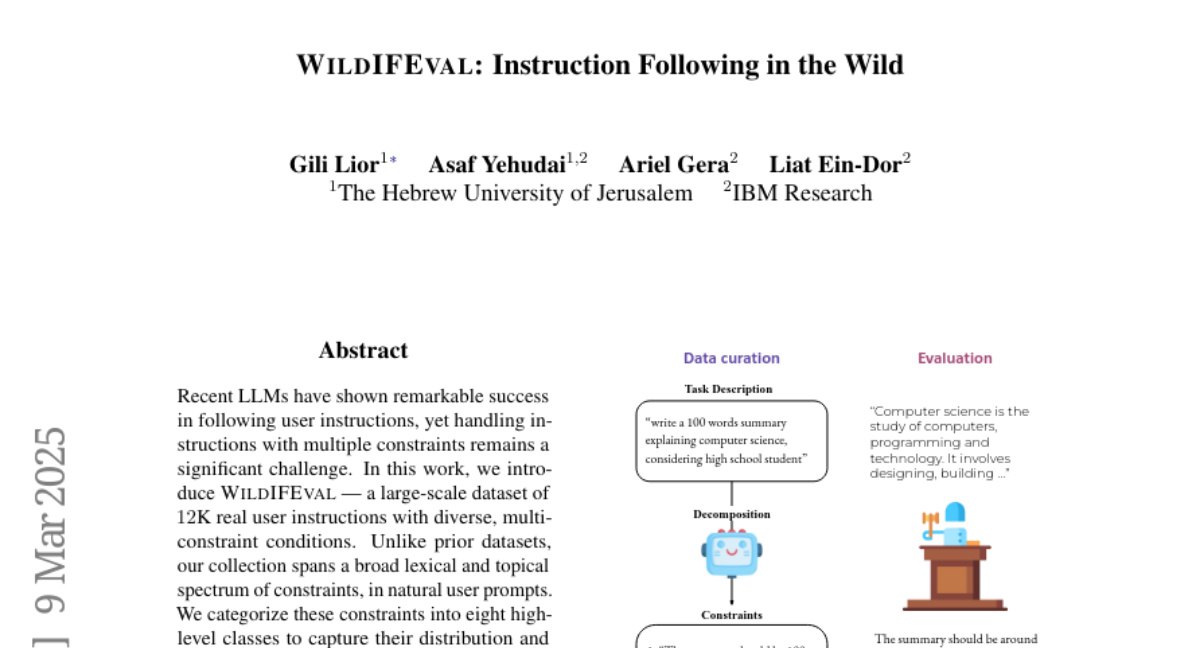

The researchers built a huge dataset of real user instructions with multiple rules, broke them into categories like 'length' or 'format,' and tested AI models to see where they fail, helping identify areas for improvement.

Why it matters?

This helps make AI assistants better at handling real-world tasks, like writing emails or stories, by teaching them to follow all parts of a user's request without dropping important details.

Abstract

Recent LLMs have shown remarkable success in following user instructions, yet handling instructions with multiple constraints remains a significant challenge. In this work, we introduce WildIFEval - a large-scale dataset of 12K real user instructions with diverse, multi-constraint conditions. Unlike prior datasets, our collection spans a broad lexical and topical spectrum of constraints, in natural user prompts. We categorize these constraints into eight high-level classes to capture their distribution and dynamics in real-world scenarios. Leveraging WildIFEval, we conduct extensive experiments to benchmark the instruction-following capabilities of leading LLMs. Our findings reveal that all evaluated models experience performance degradation with an increasing number of constraints. Thus, we show that all models have a large room for improvement on such tasks. Moreover, we observe that the specific type of constraint plays a critical role in model performance. We release our dataset to promote further research on instruction-following under complex, realistic conditions.