WISA: World Simulator Assistant for Physics-Aware Text-to-Video Generation

Jing Wang, Ao Ma, Ke Cao, Jun Zheng, Zhanjie Zhang, Jiasong Feng, Shanyuan Liu, Yuhang Ma, Bo Cheng, Dawei Leng, Yuhui Yin, Xiaodan Liang

2025-03-18

Summary

This paper introduces WISA, a new system designed to help text-to-video AI models create videos that follow the laws of physics.

What's the problem?

Current AI models that generate videos from text often struggle to understand and accurately represent physical principles, leading to unrealistic or impossible scenarios.

What's the solution?

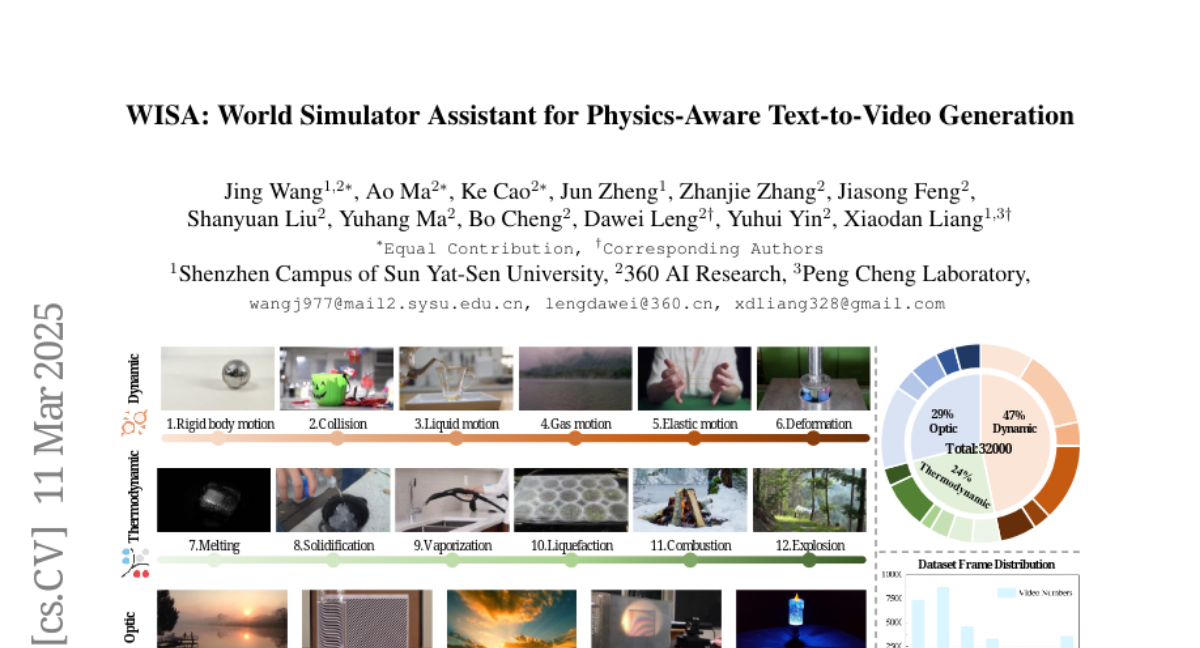

WISA breaks down physical principles into three simpler components (textual physical descriptions, qualitative physical categories, and quantitative physical properties) and integrates them into the T2V model. To embed this information into the generation process, it adds two key designs, Mixture-of-Physical-Experts Attention (MoPA) and a Physical Classifier, which improve the model's awareness of physics. The researchers also created a new dataset, WISA-32K, of 32,000 videos covering 17 physical laws across dynamics, thermodynamics, and optics.
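To make the three-part decomposition concrete, here is a minimal sketch of how one annotated video might be represented. It is an illustration only, not the authors' data format: the `PhysicalAnnotation` class, its field names, the `categories_in` helper, and the example values (including the category name `"melting"`) are all hypothetical. Only the three domains and the three kinds of physical information come from the paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Domain(Enum):
    # The three physics domains named in the abstract.
    DYNAMICS = "dynamics"
    THERMODYNAMICS = "thermodynamics"
    OPTICS = "optics"


@dataclass
class PhysicalAnnotation:
    """Hypothetical container for the three kinds of physical information."""
    description: str                     # textual physical description
    categories: Dict[str, Domain]        # qualitative category name -> domain
    properties: Dict[str, float] = field(default_factory=dict)  # quantitative properties


def categories_in(annotation: PhysicalAnnotation, domain: Domain) -> List[str]:
    """Return the sorted category names of an annotation that belong to a domain."""
    return sorted(name for name, d in annotation.categories.items() if d is domain)


# Illustrative values only; they are not taken from WISA-32K.
example = PhysicalAnnotation(
    description="An ice cube melts on a warm metal plate.",
    categories={"melting": Domain.THERMODYNAMICS},
    properties={"temperature_celsius": 40.0},
)
```

Keeping the three components in separate fields mirrors the idea that each one can be fed to the model through a different pathway, although how WISA actually does this is not specified in this summary.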

Why does it matter?

This work matters because it helps create more realistic and believable videos, which is important for building accurate world simulators and improving the quality of AI-generated content.

Abstract

Recent rapid advancements in text-to-video (T2V) generation, such as Sora and Kling, have shown great potential for building world simulators. However, current T2V models struggle to grasp abstract physical principles and generate videos that adhere to physical laws. This challenge arises primarily from a lack of clear guidance on physical information due to a significant gap between abstract physical principles and generation models. To this end, we introduce the World Simulator Assistant (WISA), an effective framework for decomposing and incorporating physical principles into T2V models. Specifically, WISA decomposes physical principles into textual physical descriptions, qualitative physical categories, and quantitative physical properties. To effectively embed these physical attributes into the generation process, WISA incorporates several key designs, including Mixture-of-Physical-Experts Attention (MoPA) and a Physical Classifier, enhancing the model's physics awareness. Furthermore, most existing datasets feature videos where physical phenomena are either weakly represented or entangled with multiple co-occurring processes, limiting their suitability as dedicated resources for learning explicit physical principles. We propose a novel video dataset, WISA-32K, collected based on qualitative physical categories. It consists of 32,000 videos, representing 17 physical laws across three domains of physics: dynamics, thermodynamics, and optics. Experimental results demonstrate that WISA can effectively enhance the compatibility of T2V models with real-world physical laws, achieving a considerable improvement on the VideoPhy benchmark. Visual demonstrations of WISA and WISA-32K are available at https://360cvgroup.github.io/WISA/.