WorldMedQA-V: a multilingual, multimodal medical examination dataset for multimodal language models evaluation

João Matos, Shan Chen, Siena Placino, Yingya Li, Juan Carlos Climent Pardo, Daphna Idan, Takeshi Tohyama, David Restrepo, Luis F. Nakayama, Jose M. M. Pascual-Leone, Guergana Savova, Hugo Aerts, Leo A. Celi, A. Ian Wong, Danielle S. Bitterman, Jack Gallifant

2024-10-17

Summary

This paper presents WorldMedQA-V, a new dataset for evaluating how well multimodal vision-language models (VLMs) answer medical questions that combine text and images, across multiple languages.

What's the problem?

Current evaluations of VLMs in healthcare often rely on question-answer datasets that are text-only and cover only a few languages and countries. This makes it hard to judge how well these models handle the combined textual and visual information found in real clinical practice, or how they perform across the different languages and cultural contexts in which healthcare is delivered.

What's the solution?

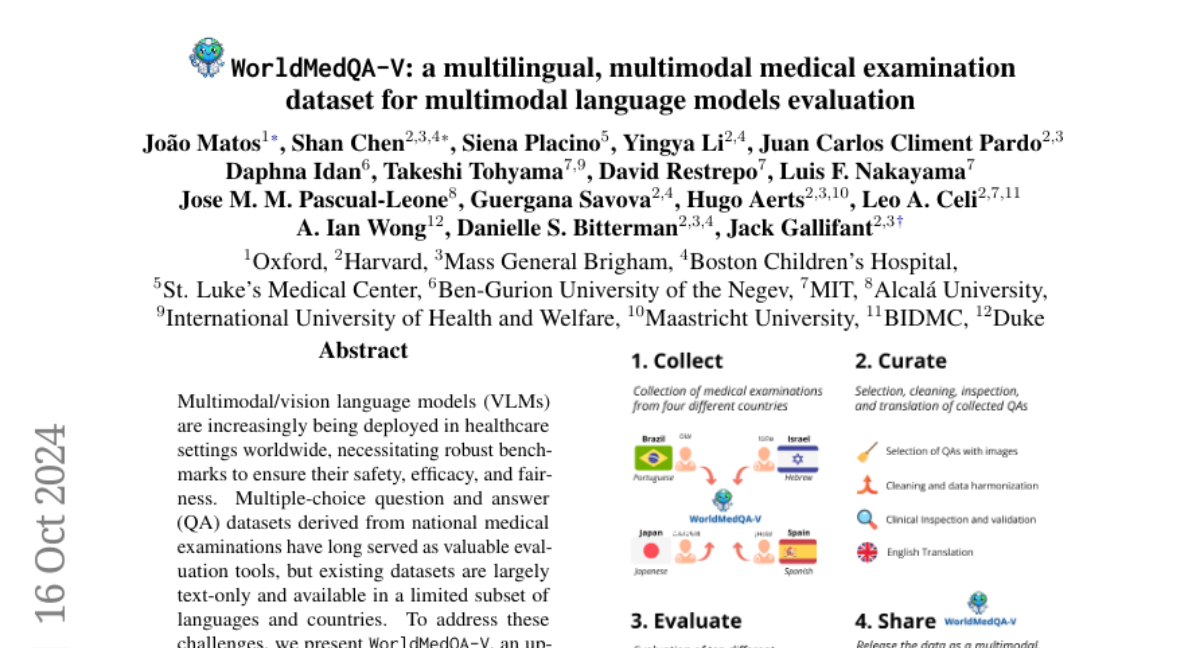

To address this gap, the authors built WorldMedQA-V, which contains 568 multiple-choice questions, each paired with a medical image, from four countries: Brazil, Israel, Japan, and Spain. Every question is available in its original language and in an English translation validated by native-speaking clinicians. Because models can be tested in either language and with or without the accompanying image, WorldMedQA-V makes it possible to measure how VLM performance on medical information changes with language and with visual input.
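To make this structure concrete, here is a minimal Python sketch of how one item and its evaluation variants might be represented. The `MedQAItem` class, its field names, and the helpers `build_prompt` and `model_inputs` are hypothetical illustrations, not the dataset's published schema or loading code; the sketch only encodes what the description states: each question has an original-language and an English version and is paired with one image that can be included or withheld.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical record layout for one WorldMedQA-V item; the field names are
# illustrative assumptions, not the dataset's published schema.
@dataclass(frozen=True)
class MedQAItem:
    country: str                    # "Brazil", "Israel", "Japan", or "Spain"
    question_local: str             # question in the original exam language
    question_en: str                # clinician-validated English translation
    options_local: dict[str, str]   # choice label -> option text (original language)
    options_en: dict[str, str]      # choice label -> option text (English)
    image_path: str                 # the medical image paired with this question
    answer: str                     # correct choice label, e.g. "B"


def build_prompt(item: MedQAItem, english: bool) -> str:
    """Format the text part of a multiple-choice prompt in one language."""
    question = item.question_en if english else item.question_local
    options = item.options_en if english else item.options_local
    # List the options in label order ("A", "B", ...) below the question.
    lines = [question] + [f"{label}. {text}" for label, text in sorted(options.items())]
    return "\n".join(lines)


def model_inputs(item: MedQAItem, english: bool, with_image: bool) -> tuple[str, Optional[str]]:
    """Return (prompt text, image path or None) for one evaluation condition."""
    image = item.image_path if with_image else None
    return build_prompt(item, english), image
```

For example, `model_inputs(item, english=True, with_image=False)` yields the English, text-only variant of an item; the four combinations of the two flags correspond to the language and image conditions described in the abstract.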

Why does it matter?

This research matters because AI systems used in healthcare need to be safe, effective, and fair in every setting where they are deployed. By testing VLMs on a dataset that reflects the diversity of medical knowledge and practice around the world, WorldMedQA-V can guide the development of AI tools that better support healthcare professionals across countries and languages, which may ultimately improve patient care.

Abstract

Multimodal/vision language models (VLMs) are increasingly being deployed in healthcare settings worldwide, necessitating robust benchmarks to ensure their safety, efficacy, and fairness. Multiple-choice question and answer (QA) datasets derived from national medical examinations have long served as valuable evaluation tools, but existing datasets are largely text-only and available in a limited subset of languages and countries. To address these challenges, we present WorldMedQA-V, an updated multilingual, multimodal benchmarking dataset designed to evaluate VLMs in healthcare. WorldMedQA-V includes 568 labeled multiple-choice QAs paired with 568 medical images from four countries (Brazil, Israel, Japan, and Spain), covering the original languages and English translations validated by native clinicians. Baseline performance for common open- and closed-source models is provided in the local language and in English translation, both with and without the images provided to the model. The WorldMedQA-V benchmark aims to better match AI systems to the diverse healthcare environments in which they are deployed, fostering more equitable, effective, and representative applications.
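The baseline protocol crosses two factors: prompt language (local or English) and whether the image is provided. As a hedged sketch, the Python below tallies accuracy for each condition and the change in accuracy when the image is added. The record layout `(country, language, with_image, predicted, gold)` and the function names `accuracy_by_condition` and `image_effect` are assumptions for illustration, not the authors' evaluation code.

```python
from collections import defaultdict


def accuracy_by_condition(records):
    """Accuracy for each (country, language, with_image) condition.

    `records` is an iterable of (country, language, with_image, predicted, gold)
    tuples; this layout is an illustrative assumption.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for country, language, with_image, predicted, gold in records:
        key = (country, language, with_image)
        total[key] += 1
        correct[key] += int(predicted == gold)  # 1 if the chosen label is right
    return {key: correct[key] / total[key] for key in total}


def image_effect(acc):
    """Accuracy with the image minus accuracy without it, per (country, language)."""
    effects = {}
    for country, language, with_image in acc:
        # Only compare conditions where both the image and no-image runs exist.
        if with_image and (country, language, False) in acc:
            effects[(country, language)] = (
                acc[(country, language, True)] - acc[(country, language, False)]
            )
    return effects
```

Keeping the country in the key reports results separately for each exam source; dropping it from the key would pool all 568 questions into the four language-by-image conditions.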