Zero-1-to-A: Zero-Shot One Image to Animatable Head Avatars Using Video Diffusion

Zhou Zhenglin, Ma Fan, Fan Hehe, Chua Tat-Seng

2025-03-21

Summary

This paper is about creating animated 3D head models from just one picture, without needing a lot of training data.

What's the problem?

Creating realistic and animatable 3D head models usually requires a ton of images and videos for the AI to learn from.

What's the solution?

The researchers developed a method that uses existing AI models and video diffusion to create a dataset that the AI can learn from, allowing it to create 3D heads from a single image.

Why it matters?

This work matters because it makes it easier to create personalized avatars for video games, virtual reality, and other applications.

Abstract

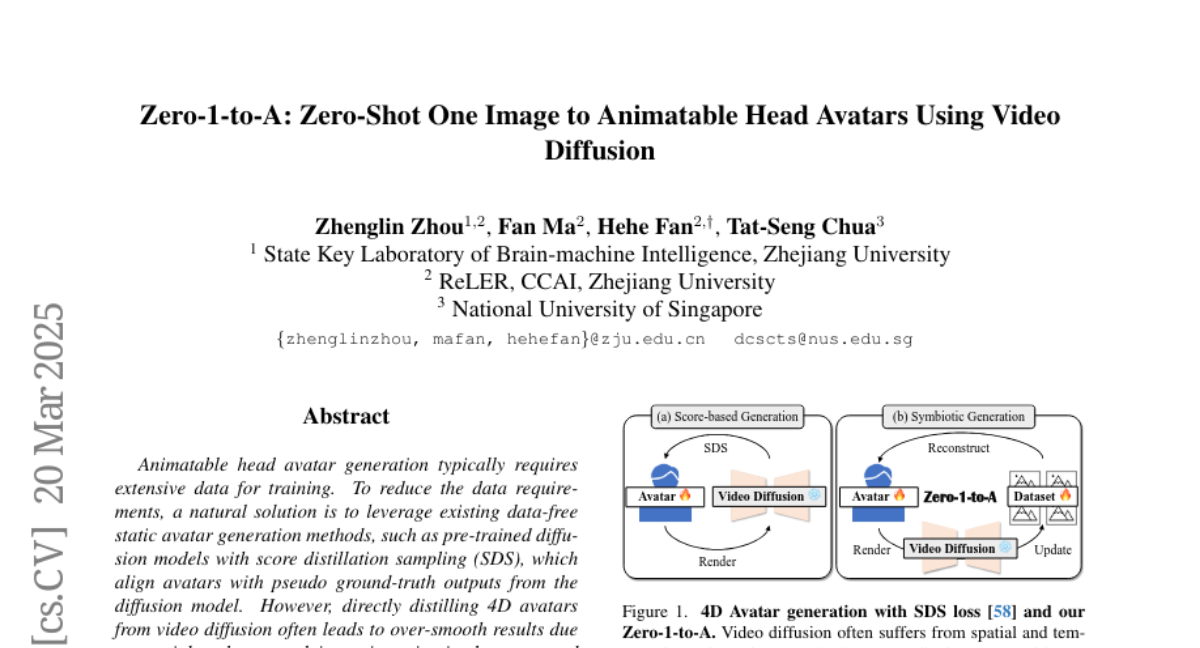

Animatable head avatar generation typically requires extensive data for training. To reduce the data requirements, a natural solution is to leverage existing data-free static avatar generation methods, such as pre-trained diffusion models with score distillation sampling (SDS), which align avatars with pseudo ground-truth outputs from the diffusion model. However, directly distilling 4D avatars from video diffusion often leads to over-smooth results due to spatial and temporal inconsistencies in the generated video. To address this issue, we propose Zero-1-to-A, a robust method that synthesizes a spatial and temporal consistency dataset for 4D avatar reconstruction using the video diffusion model. Specifically, Zero-1-to-A iteratively constructs video datasets and optimizes animatable avatars in a progressive manner, ensuring that avatar quality increases smoothly and consistently throughout the learning process. This progressive learning involves two stages: (1) Spatial Consistency Learning fixes expressions and learns from front-to-side views, and (2) Temporal Consistency Learning fixes views and learns from relaxed to exaggerated expressions, generating 4D avatars in a simple-to-complex manner. Extensive experiments demonstrate that Zero-1-to-A improves fidelity, animation quality, and rendering speed compared to existing diffusion-based methods, providing a solution for lifelike avatar creation. Code is publicly available at: https://github.com/ZhenglinZhou/Zero-1-to-A.