Zero4D: Training-Free 4D Video Generation From Single Video Using Off-the-Shelf Video Diffusion Model

Jangho Park, Taesung Kwon, Jong Chul Ye

2025-03-31

Summary

This paper presents a way to create 4D videos (dynamic 3D scenes that can be viewed from multiple camera angles as they change over time) from a single ordinary video, without training a new AI model.

What's the problem?

Creating 4D videos usually requires either extra training of several video diffusion models or training a full 4D diffusion model. Both are expensive and time-consuming, and real-world 4D training data is scarce.

What's the solution?

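The researchers developed a technique that uses an existing, off-the-shelf video diffusion model, with no additional training, to turn a single video into multi-view videos along new camera paths. It first generates key frames at the edges of a grid of camera views and time steps, guided by depth-based warping, and then fills in the remaining frames by interpolation.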
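A multi-view video can be pictured as a grid of frames indexed by camera view and time, in which the single input video supplies only one row. The sketch below labels each cell of such a grid by where its frame would come from. It is a minimal illustration under stated assumptions, not the authors' code: rows are views and columns are time steps, view 0 is the original camera, and the abstract's "edge frames" are read as the remaining cells on the grid boundary. The names `Source`, `plan_grid`, and `render` are hypothetical.

```python
from enum import Enum


class Source(Enum):
    INPUT = "input"    # frame copied directly from the given video
    KEY = "key"        # edge frame synthesized with warping guidance (step 1)
    INTERP = "interp"  # interior frame filled in by interpolation (step 2)


def plan_grid(num_views: int, num_frames: int) -> list[list[Source]]:
    """Label each cell (view v, time t) of a hypothetical views x frames grid.

    Assumptions: row 0 is the input video (original camera), and the
    key frames are all other cells on the boundary of the grid.
    """
    if num_views < 2 or num_frames < 2:
        raise ValueError("need at least 2 views and 2 frames")
    grid = []
    for v in range(num_views):
        row = []
        for t in range(num_frames):
            if v == 0:
                row.append(Source.INPUT)
            elif v == num_views - 1 or t == 0 or t == num_frames - 1:
                row.append(Source.KEY)
            else:
                row.append(Source.INTERP)
        grid.append(row)
    return grid


def count(grid: list[list[Source]], kind: Source) -> int:
    """Number of cells in the grid with the given source label."""
    return sum(cell is kind for row in grid for cell in row)


def render(grid: list[list[Source]]) -> str:
    """One text line per view: I = input, K = key frame, . = interpolated."""
    symbol = {Source.INPUT: "I", Source.KEY: "K", Source.INTERP: "."}
    return "\n".join("".join(symbol[c] for c in row) for row in grid)


if __name__ == "__main__":
    print(render(plan_grid(4, 6)))
    # IIIIII
    # K....K
    # K....K
    # KKKKKK
```

Under this reading, the number of frames that need full synthesis grows with the grid's perimeter, while everything inside the boundary is left to interpolation.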

Why does it matter?

This work matters because it makes 4D video generation more accessible: people can create immersive multi-view content without the 4D training data or computing power needed to train a dedicated model.

Abstract

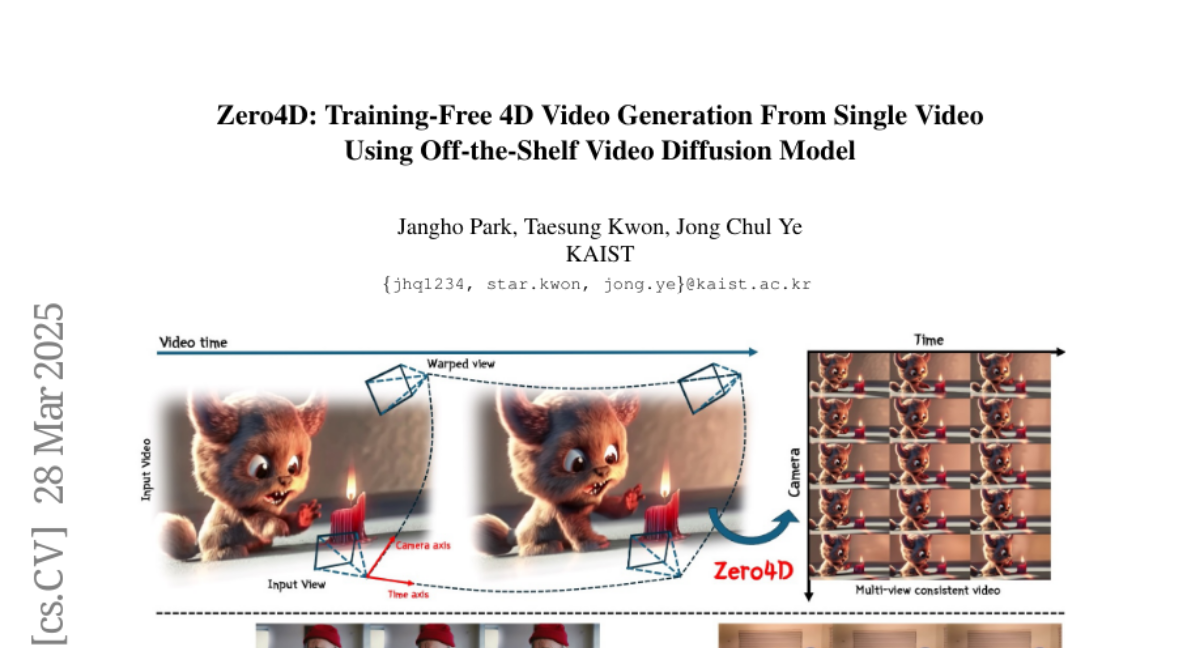

Recently, multi-view or 4D video generation has emerged as a significant research topic. Nonetheless, recent approaches to 4D generation still face fundamental limitations: they primarily rely either on harnessing multiple video diffusion models with additional training or on training a full 4D diffusion model, which is computationally expensive and constrained by limited real-world 4D data. To address these challenges, we propose the first training-free 4D video generation method that leverages off-the-shelf video diffusion models to generate multi-view videos from a single input video. Our approach consists of two key steps: (1) By designating the edge frames in the spatio-temporal sampling grid as key frames, we first synthesize them using a video diffusion model, leveraging a depth-based warping technique for guidance. This approach ensures structural consistency across the generated frames, preserving spatial and temporal coherence. (2) We then interpolate the remaining frames using a video diffusion model, constructing a fully populated sampling grid while preserving spatial and temporal consistency. Through this approach, we extend a single video into a multi-view video along novel camera trajectories while maintaining spatio-temporal consistency. Our method is training-free and fully utilizes an off-the-shelf video diffusion model, offering a practical and effective solution for multi-view video generation.
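Step (1) uses depth-based warping as guidance, but the abstract does not say how the warping is implemented. The following is a generic sketch of standard depth-based forward warping under a pinhole camera model, not Zero4D's code. Each source pixel is lifted to 3D using its depth and the intrinsics `fx, fy, cx, cy`, moved into the target camera by a rotation `R` and translation `t`, and reprojected; a z-buffer keeps the nearest surface when several pixels land on the same target. The function name and argument layout are hypothetical, and the assumption that per-pixel depth is available (for example from a depth estimator) is ours.

```python
def warp_with_depth(image, depth, fx, fy, cx, cy, R, t):
    """Forward-warp `image` into a new camera using per-pixel depth.

    Hypothetical helper (not from the paper). image: H x W nested list of
    pixel values; depth: H x W positive depths in the source camera.
    R (3x3 nested list) and t (length 3) map source-camera coordinates to
    target-camera coordinates. Returns (warped, mask), where unfilled
    target pixels are None in `warped` and False in `mask`.
    """
    h, w = len(image), len(image[0])
    warped = [[None] * w for _ in range(h)]
    zbuf = [[float("inf")] * w for _ in range(h)]
    for v in range(h):
        for u in range(w):
            z = depth[v][u]
            # Unproject pixel (u, v) to a 3D point in the source camera frame.
            p = ((u - cx) / fx * z, (v - cy) / fy * z, z)
            # Rigid transform into the target camera frame: q = R p + t.
            q = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
            if q[2] <= 0:
                continue  # point is behind the target camera
            # Project into the target image and round to the nearest pixel.
            u2 = round(fx * q[0] / q[2] + cx)
            v2 = round(fy * q[1] / q[2] + cy)
            if 0 <= u2 < w and 0 <= v2 < h and q[2] < zbuf[v2][u2]:
                zbuf[v2][u2] = q[2]  # keep the nearest surface (z-buffer test)
                warped[v2][u2] = image[v][u]
    mask = [[px is not None for px in row] for row in warped]
    return warped, mask


if __name__ == "__main__":
    img = [[1, 2, 3], [4, 5, 6]]
    dep = [[1.0] * 3 for _ in range(2)]
    identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
    # Shift scene points +1 unit along x in camera space (depth 1, fx = 1).
    warped, mask = warp_with_depth(img, dep, 1.0, 1.0, 1.0, 0.5, identity, (1.0, 0.0, 0.0))
    print(warped)  # [[None, 1, 2], [None, 4, 5]]
```

Target pixels left as None are regions the source view never saw. In a guidance setup like the one the abstract describes, the video diffusion model would have to synthesize such regions, but how Zero4D conditions sampling on the warped frames is not stated in the abstract and is not modeled here.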